William D. Nordhaus

Biographical

I first saw light in Albuquerque, New Mexico, USA, at the dawn of World War II. My earliest memories are of the warm climate, skiing in winter, trout fishing in summer, and a fragrant alfalfa field outside my window.

How did I get from an alfalfa field in New Mexico in the 1940s to Stockholm in 2018? This essay recounts the intellectual history of my involvement with climate-change economics, or in the words of the Prize citation, “integrating climate change into long-run macroeconomic analysis.” I will concentrate on the people who taught me, the colleagues and mentors who inspired me, the bureaucratic obstacles encountered, the critics who nudged me toward improvements, and above all the pure joy of the half-century voyage of discovery.

My interest in the environment can be traced back to my father’s passion for skiing. Robert J. Nordhaus had joined the Tenth Mountain Division, troops on skis, and fought in the Italian campaign in World War II. When he returned to practice law in Albuquerque, he decided he needed to develop a ski area called La Madera (“the timber”) on the Sandia Mountains east of Albuquerque. When I describe this to friends as a “ski area in the desert Southwest,” they wonder just how crazy it was to build a ski area above a town that averages 10 inches of snowfall a year. As a boy, I once tried to predict future snowfall using some quixotic mathematics because I knew that the difference between snow and drought was the difference between happiness and boredom. Skiing has been a favorite sport of all my father’s lineal descendants.

Figure 1. Nordhaus children, Gallinas River, New Mexico, 1948.

Photographer unknown.


I am often asked how I became interested in the economics of climate change. Was it my boyhood passion for skiing, with its dependence on weather? Perhaps, in part. However, as Voltaire wrote, history is a fable agreed upon. I have no surviving diary of this period, so I will accept this origin story as plausible, much in the spirit of the founding fables of cultures that do not have written literature.

Before leaving skiing, it is worth using this as an example of the impacts of climate change and the offsets of technology. Because of global warming, the snowline in ski areas will rise about 1,000 vertical feet. If nothing else changes, my boyhood ski area of La Madera will no longer offer skiing.

Several technological developments more than offset the warming, however. The most important is “snow-making,” which allows production of snow at above-freezing temperatures from water through high-powered nozzles. The net effect of warming-plus-technology is that the coverage of slopes for many ski areas has actually increased rather than decreased in the last half-century.

Skiing is a good example of how “managed systems” can adapt to climate change and reduce damages. Much of the economy of high-income countries has similar adaptive capabilities. For example, those who study agriculture, such as MIT’s John Reilly, find that adaptation can reduce much of the negative impact of warming up to 2°C or so. Similar studies apply to some but not all of infrastructure.

However, there are large and important parts of the world that are “unmanaged” or “unmanageable.” Vulnerable unmanaged systems include rain-fed agriculture, seasonal snow packs, river runoffs, threatened species, and natural ecosystems. Ocean carbonization means that ocean crustaceans are doomed.

I grew up in a family of six. My older brother, Bob, was a lawyer and became a leading draftsman of energy and environmental legislation for the US House of Representatives; we like to think of him as the architect of the deep state. The second born, Dick, was more the artist and landed as a successful architect. I was the third boy. My parents were fortunate on the fourth draw, and I have a younger sister, Betsy, who has lived around the world and now resides in Israel with her extended family.

Much has been written about the importance of birth order, but my experience differed from the psychological formulas. I was the third of three boys. I don’t suppose I was a huge disappointment, but a third boy was probably unwelcome given that the first two were rowdy. My father, who in later years was a most beloved grandfather, in my early years was an Army captain with a German-Jewish background and a firm sense of who was in charge and should be called sir (he was). He commanded the first born, Bob, to be a lawyer (he did), to join the Army (he did), and to go to Yale (he refused).

By the time I arrived and was growing up during World War II, my parents were bored with boys. So, they paid the bills but largely ignored me. This family arrangement was heaven and set my course for the rest of my life. I came to believe that if I stayed within the bounds of rules and civility, I could do anything I wanted, fail or succeed, have fun or struggle, laugh or cry. I didn’t have to join the Army or be a lawyer, but in one of the great windfalls of my life, I did go to Yale.

In hindsight, Yale in the early 1960s was a paradise for minds coming to the age of abstract reason. I was especially interested in the humanities and social sciences, while the natural sciences held little attraction (which fortunately matched Yale’s comparative advantage). I spent the first two years in a program called Directed Studies (DS), which was an interdisciplinary program focusing on the classics of literature, art, and social sciences. The courses were taught by awesome teachers like Vincent Scully (history of art), Leonard Krieger (history), Victor Brombert (literature), and Richard Bernstein (philosophy). These scholar-teachers were role models for my future academic life.

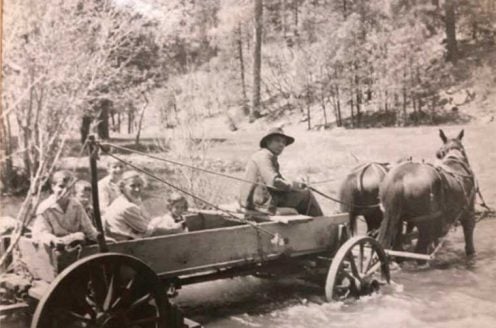

After two years, I became restless with Yale and decided to spend my junior year in Paris. That was another fortunate diversion. One sunny afternoon, at Café de l’Univers, I encountered my fellow student and future wife, Barbara Feise. We spent the year as flaneurs in Paris, where we studied and went to concerts together, she taught me about fine food, and I enticed her to ski. We continue to enjoy all these activities together today. When we moved to New Haven, Barbara began her career as a clinical social worker and then psychoanalyst at Yale’s fabled Child Study Center (in those days, the medical school’s equivalent of the economics department’s Cowles Foundation).

Figure 2. The High Line, New York City, 2009

Photographer: Larry Bercow.


The intellectual environment in Paris was a sadder tale. Paris, and Sciences Po where I studied, was still in the intellectual ruins of World War II and a backwater for economics. My teacher had not yet made it to Marxian economics but still taught Ricardian economics (with analytics from around the 1820s). But with all the other fascinating things to do, I just ignored my schooling for a year and soaked up the culture.

When I returned to Yale for senior year, I became serious about economics. I took macroeconomics with James Tobin, economic history with Henry Broude, and political economy with Ed Lindblom (using his book with Robert Dahl). When I graduated, I was thrilled to be admitted to the best graduate economics program in all the world and of all time, at MIT.

MIT was Arcadia for young economists. Teachers included Bob Solow, Paul Samuelson, Ken Arrow, and a host of others and they provided me the tools of the trade. But my real teachers were my fellow students. The ones I was closest to were George Akerlof, Bob Gordon, Bob Hall, Joe Stiglitz, and Marty Weitzman. I was fearful of looking stupid to Ken Arrow, but my fellow students were kind enough to pardon my offenses.

In tense times, I muse that being a married graduate student is the best stage of life. No responsibilities, no stress, no wails from the crib, no calls from the school principals, no employers haranguing you, no committees. But lots of time for talk and reflection and playing Go, and all this paid for by scholarships. After three and a half years of graduate study, I was done and ready to get a real job, and in 1967 I moved back to Yale as an assistant professor.

Evolution of climate-economy models

One of the key ideas emphasized in modern economics, and in graduate programs such as MIT’s in the 1960s, is the importance of models. A model is a simplified, but not oversimplified, picture of a more complex reality.

The first model I built was of snowfall in New Mexico, and it was just a fantasy model. One of the joys of academic economics is model-building. I built models of railroad profits, of the US macroeconomy, of the patent system, of inflation, of productivity, and of induced technological change.

I then turned to energy models.

The 1970s were the high-water mark of modern Malthusianism. We were awash in books like The Population Bomb and The Limits to Growth, which predicted stagnation, the decline of living standards, and spreading famines.

One of the doomsday books was by MIT’s Jay Forrester, called World Dynamics and published in 1971. He was a computer genius, but I was too young to care and was appalled by the lapses in Forrester’s study and the later famous offspring, The Limits to Growth. I then built and published in 1974 a model of the Forrester model to show that the results were highly sensitive to many assumptions and that any of several alternative assumptions would lead to continued growth in living standards. History was not kind to the predictions of any of these studies, and Malthusian thinking proved as inappropriate to the late 20th century as it had been in the early 19th century.

My other response to the flaky Limits literature was to take it seriously. Clearly there are limited resources of oil, gas, copper, and clean air. Just as clearly, technological change is finding substitute processes that replace scarce resources with superabundant resources. Perhaps the best example is fiber optics (light plus silicon) as a substitute for copper wire. The quadrillion-dollar question is whether technology will outpace depletion.

It was here that working at Yale sent me down a path that was different from the standard economic approach. Much brilliant work of this time relied on econometric analysis and modeling. Colleagues at Yale, particularly Tjalling Koopmans and Herbert Scarf, persuaded me that better methods were activity analysis (whether linear programming or LP, which I started with, or computable general equilibrium or CGE, which came later).

These approaches combine the fundamental constraints of resource availability (of oil, gas, coal, uranium, and so on) with society’s preferences to determine the efficient path of resource extraction and depletion. Along the way, they also compute the efficiency prices, such as the price of oil. Theoretical studies showed that, if calculated correctly, the efficient outputs and prices of the LP solution correspond to the outcomes of competitive markets, this being “the correspondence principle.”

Using the programming approach to energy systems, I developed a model called the “Bulldog model,” named after the mascot of Yale. The model found that the efficiency price of oil was close to the actual market prices of the 1960–1972 period. The model suggested a gradual transition from oil and gas to coal and synthetic fuels and then to superabundant resources, which were then thought to be nuclear power.

I was assisted by a brilliant Yale undergraduate named Paul Krugman and planned to present and publish a paper with the results in the fall of 1973. Then, in October 1973, the first oil crisis erupted. Energy markets went haywire, oil prices went up 300%, other resource prices followed upward, inflation soared, stock markets tanked, recessions followed, and the world entered the Era of Scarcity.

The energy crisis

It is difficult today to reconstruct the mindset of people in the mid-1970s. Energy prices rose sharply, there were long lines in gas stations, many advocated wartime rationing of gasoline, and it was hard to find staples like toilet paper. Newsweek had an issue with an empty cornucopia and a cover, Running Out of Everything. The economic crisis was shadowed by the American political crisis of the collapsing Nixon administration, followed shortly by a Carter administration that foundered in another energy/oil crisis, inflation, sky-high interest rates, and a sense of malaise.

Figure 3. Cover, Newsweek magazine, November 1973.

But the fortunes of economists are counter-cyclical. For a young energy economist working in the 1970s, there was music in the cafés at night and revolution in the air (Dylan 1975). My work on energy convinced me that the crisis was primarily one of a mismanaged energy regulatory system and a rigid capital stock. It was definitely not the Limits or Newsweek scenario of running out of everything. I reviewed the issues in a short paper on resources as a constraint on growth in 1974. I concluded that energy and other subsoil resources were unlikely to be major constraints, nor were environmental problems on a national level.

First stirrings of global concerns

However, global environmental issues were a different problem. I first encountered global environmental issues in a visionary 1970 MIT report by a group of scientists, Man’s Impact on the Global Environment: Assessment and Recommendations for Action. This report stirred my interests in global environmental issues, and my next research took up the issues outlined in that report.

I went back to reread the 1970 report to prepare the present essay. Because we know so much today, it is hard to remember how little was known in 1970. There was only one estimate of the impact of rising levels of carbon dioxide, CO2. Specialists thought the globe was cooling and that rising levels of particulates would further exacerbate cooling. The first study of the economics of climate change examined the impact of cooling, not warming. The only alternative to fossil fuels was thought to be nuclear power, which was viewed by many scientists with suspicion. It was many years later, perhaps only in the 1990s, that the measured changes in climate began to match the model projections.

Until that time, climate change was based on fundamental science, and only in the last two decades has the science been validated by observations.

A waltz to Vienna

My life took an unexpected and fortunate turn in the summer of 1974 when our family spent a year in Vienna at IIASA, the International Institute of Applied Systems Analysis. IIASA was an international research organization, with scholars from both sides of the Iron Curtain, devoted to global problems of mutual concern. It attracted a group of talented scientists and economists and was led by Harvard’s Howard Raiffa, a pioneer of decision theory. We thought Vienna was the last romantic city left in Europe, and that was half-true, with beautiful music but a gritty environment.

I was encouraged to go to IIASA by Tjalling Koopmans, and he was a source of inspiration and support throughout this formative period. My group was devoted to energy studies and included an analyst and algorithmist (Alan Manne from Stanford), a font of futuristic ideas (Cesar Marchetti), and a young economist from Belgrade with whom I have co-authored four books (Nebojsa “Naki” Nakicenovic).

Scholars shared offices at IIASA, and by lottery I joined Allan Murphy, a distinguished climatologist from Oregon State University. We shared stories about our interests, and he encouraged me to think about the impact of the economy on climate systems. Thus began my study of the economics of climate change.

I was familiar with economics from my own studies and from having taught the entire subject (macroeconomics and microeconomics) at the introductory, intermediate, and graduate levels at Yale. But I knew nothing about the scientific aspects of climate change, zero. I set out, beginning at IIASA, to learn about the relevant sciences. I later thought it was fortunate I had not studied the sciences in school because they would be outdated, like the biology course I took in 1958 that did not mention DNA. My self-taught courses involved the writings of scientists like M.I. Budyko, Lester Machta, Suki Manabe, H.H. Lamb, and Charles Keeling. It then involved tutorials, when I was on committees of the US National Academy of Sciences, from leading lights such as Roger Revelle, Joseph Smagorinsky, Stephen Schneider, Jesse Ausubel, Paul Waggoner, Ram Ramanathan, the polyglot economist Tom Schelling, and a young star environmental economist, Gary Yohe.

The IIASA model

The first step in integrated modeling of climate change was actually quite simple. It involved beginning with the Bulldog energy model, adding emissions of CO2 (a simple set of linear equations), and then adding a Markov distribution matrix among seven carbon reservoirs (following the work of Lester Machta). We used a high-end LP program in that period’s large computer and stacks of IBM cards. After a couple of months of programming, clearing computer jams, and fixing bugs and mistakes, we ended up with the first integrated assessment model of climate-change economics.
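The carbon-cycle step described above can be illustrated with a minimal sketch. It assumes three reservoirs rather than the seven mentioned in the text, and every name and number in it (`RESERVOIRS`, `TRANSFER`, the starting stocks) is hypothetical, chosen only to show how a distribution matrix moves carbon among reservoirs while conserving total mass. It is not the IIASA model's code or calibration.

```python
# Hypothetical three-reservoir illustration; the model in the text used seven.
RESERVOIRS = ["atmosphere", "upper ocean", "deep ocean"]

# TRANSFER[i][j] is the share of reservoir i's carbon that sits in reservoir j
# one period later. Each row sums to 1, so carbon is only redistributed.
TRANSFER = [
    [0.90, 0.10, 0.00],
    [0.05, 0.90, 0.05],
    [0.00, 0.01, 0.99],
]


def step(stocks, emissions):
    """Redistribute existing carbon, then add new emissions to the atmosphere."""
    n = len(stocks)
    new = [sum(stocks[i] * TRANSFER[i][j] for i in range(n)) for j in range(n)]
    new[0] += emissions
    return new


def simulate(stocks, emissions_path):
    """Apply step() once per period along a path of emissions."""
    for e in emissions_path:
        stocks = step(stocks, e)
    return stocks


if __name__ == "__main__":
    start = [800.0, 1000.0, 30000.0]      # illustrative stocks
    end = simulate(start, [10.0] * 50)    # 50 periods of constant emissions
    for name, value in zip(RESERVOIRS, end):
        print(f"{name}: {value:.1f}")
```

Because the emissions that feed this matrix are linear in the energy activities, a block of this kind can be attached to a linear-programming energy model without changing its structure.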

While the modeling was simple, I encountered a major obstacle. The leader of the energy program was a nuclear physicist and a fierce proponent of breeder nuclear-power reactors as a long-run solution to energy scarcity. Breeder reactors are a second-generation design that produces as much fissile material as it uses. It was a complete economic dead-end because of high costs and the subsequent decline in first-generation reactors, but the breeder has a certain expensive elegance.

When I described my proposed research program to include CO2 to the leader, he ordered me to stop the work. It was not, he declaimed, on the work plan of the energy project, i.e., on his work plan. It was obvious to me that taking climate seriously would be an advantage to nuclear power, but he did not see that yet, so it also was not in his work plan.

In skiing, if you see a bare spot, you go around it. So too with the leader. I went around him to the director of IIASA, Howard Raiffa, and explained the problem. Being a deep advocate of undirected research, he simply assigned me to another program, on methodology, where I would find encouragement. It left some residual bitterness between me and the leader, but the work was not slowed more than a couple of hours.

This research produced the IIASA model, which was an early integrated analysis, including projections of atmospheric CO2. Because of the LP approach, it allowed analysis of constraints (such as limits on CO2 concentrations). Most interesting were the results for shadow prices, which eventually were interpreted as carbon taxes.

The concept of shadow prices originated in the mathematics of Lagrange and later in the Economics Prize-winning work on linear programming of Kantorovich and Koopmans. We are accustomed to market prices (such as the price of gasoline), which represent the costs to producers and the value to consumers. Shadow prices are the social equivalents, that is, the social costs and values, but they are costs that are not captured by markets because of externalities. In climate change, the shadow price of CO2 emissions is the social cost that is not reflected in the price that we consumers pay for our emissions.

Our energy/climate models calculate the shadow prices of CO2 emissions.

The shadow price indicates the cost to the economy of an extra ton of emissions; perhaps the shadow price is $40 per ton of CO2. The correspondence principle states that, in a properly functioning economy with no externality, the market price would be $40 per ton. The $40 price would reflect the value to consumers and the cost to producers of the last unit.
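A toy calculation may make the $40 figure concrete. The sketch below is an assumption-laden illustration, not the Bulldog or IIASA model: it supposes two hypothetical ways of supplying energy, a cheap fuel that emits one ton of CO2 per unit and a clean source that costs $40 more per unit, plus a cap on emissions. The function names and all numbers are invented for the example. The shadow price of the cap is the amount by which the least-cost bill falls when the cap is loosened by one ton.

```python
def min_cost(demand, cap, cheap_cost=20.0, clean_cost=60.0, tons_per_unit=1.0):
    """Least-cost way to meet demand under an emissions cap.

    With only two activities the linear program can be solved by inspection:
    use the cheap, emitting fuel until the cap binds, then the clean source.
    All costs and coefficients are hypothetical.
    """
    cheap_units = min(demand, cap / tons_per_unit)
    clean_units = demand - cheap_units
    return cheap_cost * cheap_units + clean_cost * clean_units


def shadow_price(demand, cap, step=1.0):
    """Cost saved per extra ton of allowed emissions (finite difference)."""
    return (min_cost(demand, cap) - min_cost(demand, cap + step)) / step


if __name__ == "__main__":
    print(shadow_price(demand=100.0, cap=60.0))   # cap binds
    print(shadow_price(demand=100.0, cap=150.0))  # cap is slack
```

When the cap binds, the shadow price equals the $40 cost gap between the two sources; when the cap is slack, it is zero. A tax of that size on emissions would lead a cost-minimizing producer to the same fuel mix, which is the sense in which a shadow price can be read as a carbon tax.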

I knew about shadow prices from work on the Bulldog energy model, but at first I was puzzled by the shadow prices on CO2 in the IIASA model. Eventually, I figured out that the CO2 shadow price is the social cost of CO2 emissions. When the study was published in 1977, I explicitly recognized this as a fiscal question, interpreting the shadow price as a carbon tax to implement policy on a decentralized level. This idea lay fallow for many years and then resurfaced as the “social cost of carbon,” a concept that is now central to climate policies.

The IIASA study was my first attempt at integrated-assessment models (IAMs) of climate-change economics. It had two major shortcomings.

The first was that it was a partial-equilibrium model; that is, it took the overall economy and interest rates as given. The second was that it did not really close the loop because it had no damage function. Rather it imposed an artificial constraint on CO2 concentrations, or in a later version on temperature. This leads to an interesting question of the evolution of temperature limits.

Two degrees of modeling

While the IIASA study in 1975 contained no estimates of damages, it did attempt to close the loop from emissions to policies by considering different approaches to setting standards for the control of CO2. It noted that there was no serious work on standards, and that standards need to be set in terms of society’s technology and preferences.

The resolution was in retrospect a wild guess at a standard: temperature limits. I wrote that as a first approximation temperature increase should be kept within the normal range of long-term climatic variation. Studies at that time (1975) suggested that a temperature increase of more than 2 °C would take the planet outside the range of temperatures experienced over the last several hundred thousand years. Since the IIASA model did not have a temperature module, I estimated that a 2 °C target was consistent with a doubling of CO2 concentration, so that was used as a proxy for temperature increase.

At the same time, I emphasized that this was the starting point, not the ending point: “It must be emphasized that the process of setting standards used in this section is deeply unsatisfactory, both from an empirical point of view and from a theoretical point of view.” (Emphasis in original)

I will not follow the subsequent discussion of temperature targets, which is beautifully described in a paper by Carlo Jaeger and Julia Jaeger. The target of 2 °C was endorsed in the 2015 Paris Accord and is the aspirational standard around the world. My reservations have not changed over the years. As I wrote four decades later, “We cannot realistically set climate-change targets without considering both the costs of slowing climate change and benefits of avoiding the damages.”

From early stirrings to the DICE model

Over the next two decades, I moved from model to model like a pilgrim trying to reach the Promised Land. The main goal was to fix the two major flaws in the IIASA model. The first task was to develop a general-equilibrium framework, and the second was to develop the modules of the climate externality.

Accomplishing these two goals took nearly two decades, and what finally emerged was the DICE model (Dynamic Integrated model of Climate and the Economy). The first major study was rejected by economic journals but accepted and published in Science in 1992.

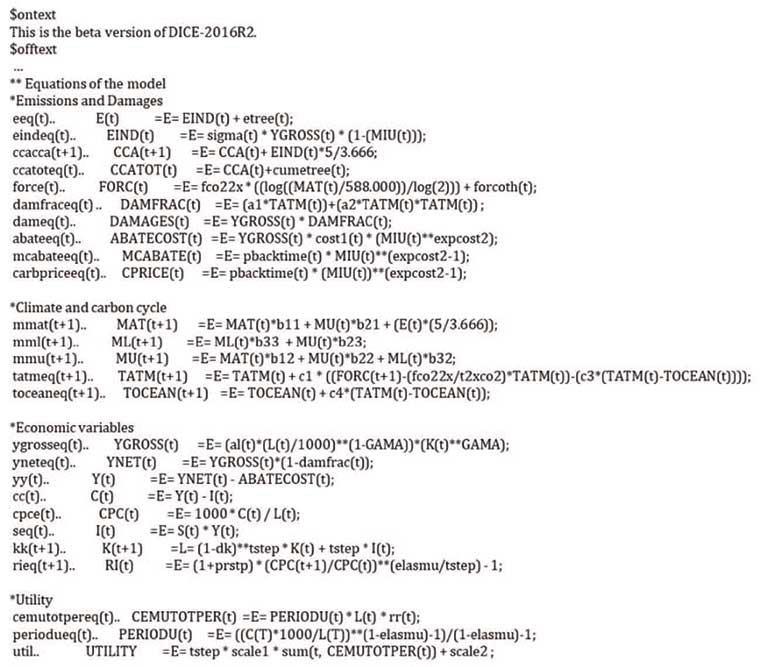

Figure 4. Computer code of DICE model

Photograph by author.


I have always loved the name of the DICE model. It is easy to remember and visualize. DICE also conveys a shiver of risk and danger. It alludes to the Faustian bargain that we make as we continue down the path of unchecked climate change, the Walpurgis Night of reveling without reckoning on how the devil of damages will come to drag us to a hellish future.

The first major change was to scrap the large energy model and move to a Ramsey model of optimal economic growth. I learned growth theory from Sukhamoy Chakravarty, Karl Shell, and Bob Solow at MIT. But writing code for a computerized model is a different animal than solving differential equations. That step had been taken by Alan Manne, Rich Richels, and others for a standard economy without climate starting in the early 1980s.

But I wanted to have a closed system, which required developing, estimating, and programming the externality equations along with the Ramsey model. The required externality equations were for emissions, a carbon cycle, a climate module, a damage function, and an emissions-control variable. None of these five major modules existed in a way that was suitable for an economic model to solve the climate problem in its fundamental form. Finding satisfactory modules was the pilgrimage that took two decades.

Rather than going through the evolution of each, I will take the examples of the climate and damage modules. For climate, the issue is how to go from the path of CO2 concentrations in each year to the path of global mean temperature in each year. Climate models do this, but they have hundreds of thousands of variables and take a month to solve on a supercomputer. Moreover, they are recursive, and the economic approach is optimization, which is computationally much more complex. I needed a few climate equations, certainly fewer than a dozen, not a dozen thousand.

I stumbled through several iterations. I would ask climatologists, but they generally were not interested in economics or in simple models. I would try simple empirical equations, but they would fit poorly and were methodologically unsatisfactory. Moreover, I wanted something that was not only simple but acceptable to the climate community.

It was then, around 1990, that I encountered the dazzling scientist-advocate Stephen Schneider, at NCAR, then Stanford. When I told him what I needed, he said that he had just what I needed. It was the Schneider-Thompson model, which was a two-equation climate model. I studied it, decided it was the right scale, and adopted it in 1992. The same basic structure, with different parameters, has been part of the DICE model since then. The genius of the Schneider-Thompson approach was to rely on radiative forcings, or the earth’s heat balance, rather than CO2 concentrations for climate change. This allowed closer integration with other high-resolution models over the years. Steve died in 2010 of a vicious form of lymphoma, but I send him a silent greeting and murmur of thanks whenever I think of him.
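To give a sense of the scale involved, here is a minimal sketch of a generic two-equation climate module driven by radiative forcing. It is a standard two-box energy-balance form (a surface layer exchanging heat with a deep ocean), written under stated assumptions: the equations and every parameter value (`F2X`, `LAMBDA`, `C1`, `C3`, `C4`) are illustrative and are not taken from the Schneider-Thompson paper or from any DICE calibration.

```python
import math

# All parameter values below are assumptions for illustration only.
F2X = 3.6      # forcing from a doubling of CO2, W/m^2
LAMBDA = 1.2   # feedback parameter, W/m^2 per degree C
C1 = 0.1       # speed of surface temperature adjustment per period
C3 = 0.1       # heat loss from surface to deep ocean, W/m^2 per degree C
C4 = 0.02      # speed of deep-ocean adjustment per period


def forcing(conc, conc0=280.0):
    """Radiative forcing from CO2, logarithmic in the concentration ratio."""
    return F2X * math.log2(conc / conc0)


def step(t_surface, t_deep, f):
    """Advance the two temperature equations by one period."""
    new_surface = t_surface + C1 * (
        f - LAMBDA * t_surface - C3 * (t_surface - t_deep)
    )
    new_deep = t_deep + C4 * (t_surface - t_deep)
    return new_surface, new_deep


def simulate(conc_path):
    """Surface warming along a path of CO2 concentrations (ppm)."""
    t_surface = t_deep = 0.0
    path = []
    for conc in conc_path:
        t_surface, t_deep = step(t_surface, t_deep, forcing(conc))
        path.append(t_surface)
    return path


if __name__ == "__main__":
    warming = simulate([560.0] * 300)   # CO2 held at twice 280 ppm
    print(round(warming[0], 2), round(warming[-1], 2))
```

With concentrations held at double the baseline, surface warming in this sketch rises gradually toward `F2X / LAMBDA`, the equilibrium implied by the assumed parameters, while the deep ocean slows the approach. Because temperature responds to forcing rather than to CO2 directly, other forcings could be added to `f` without changing the two equations.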

Each of the other major modules has its own story. The other difficult one was the damage function. Damage functions originated in environmental economics in the 1970s. Estimates of damages from climate change began to appear in the 1980s, and by 1990 it was possible to make estimates of damages along a climate path. My earliest estimates were that damages were a linear function of output and temperature, and later I changed this to quadratic, which is the form that has survived to this date.
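The difference between the linear and quadratic forms can be seen in a few lines. This is a sketch under assumptions: damages are expressed as a share of output, the coefficient `coeff` is a hypothetical placeholder rather than an estimate from any DICE version, and the function names are invented for the example.

```python
def damage_fraction(temp_c, coeff=0.002, power=2):
    """Share of output lost at warming temp_c (degrees C): coeff * T**power.

    power=1 gives the linear form, power=2 the quadratic form.
    The coefficient is a hypothetical placeholder.
    """
    return coeff * temp_c ** power


def net_output(gross_output, temp_c, coeff=0.002, power=2):
    """Output remaining after climate damages."""
    return gross_output * (1.0 - damage_fraction(temp_c, coeff, power))


if __name__ == "__main__":
    for temp in (1.0, 2.0, 4.0):
        linear = damage_fraction(temp, power=1)
        quadratic = damage_fraction(temp, power=2)
        print(temp, linear, quadratic)
```

Under the linear form, doubling the warming doubles the damage share; under the quadratic form it quadruples it, so the choice of form matters most at high temperatures.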

Some of the most impressive work on damages has been done by my Yale colleague Robert Mendelsohn. He and I did the first paper using the controversial Ricardian method for measuring damages in US agriculture, and he and others have applied that approach more widely. This approach solves a key problem, which is to distinguish climate from weather. Some of the current approaches forecasting high damages, often cited by alarmists, rely on weather shocks, which are entirely different from climate change.

Another persistent issue in the damage function is the treatment of catastrophic impacts. This question was highlighted by Harvard’s Martin Weitzman. He argues that the likelihood of extreme outcomes might dominate the analysis because of “fat tails” in the distributions. We see fat tails when the stock market goes down 23% or when a Japanese earthquake is many times larger than any in the recorded history of Japan. Under a normal distribution, it is extremely unlikely to see a seven-foot-tall man walk through the door (perhaps one chance in a million). In a fat-tailed distribution, we might occasionally see a twenty-foot person. Similarly, for climate change according to Weitzman, we might have catastrophic changes that would be civilization-ending. The point, emphasized by Weitzman, is that fat tails would make DICE-type analyses irrelevant for climate policy because it would be looking at weather forecasts in the last minutes of the Titanic.

Arguments about damage functions have been going on for almost three decades, and they are just as heated today as in the earliest times. If anything, further research has increased rather than reduced the uncertainties.

Since 1992, the DICE model has spawned many versions as well as spin-offs. It has been extended to a regional model (RICE, joint with Zili Yang), a probabilistic model (joint with David Popp), a model with induced innovation (R&DICE), and one to understand climate clubs (Coalition-DICE). Former students such as Lint Barrage (now at Brown) have pushed the boundary to include other issues, such as taxation. It is used around the world, and I get emails virtually every day from scholars or college students asking about its structure or critiquing its assumptions. My friend and colleague Martin Weitzman has remarked that updating the DICE model will provide lifetime employment until we solve the climate problem, which means lifetime employment.

From DICE to climate clubs

All economists who have worked on climate change knew it was “The Hard Problem” of economic policy. Global public goods are difficult because there is no market or political mechanism to solve them, and climate change is the Colossus of all global public goods. Despite the best efforts by economists, scientists, and politicians to forge effective international agreements, progress on this Hard Problem has been slim. The effective global carbon tax in 2018 is about one-tenth of my estimated target, and perhaps one-one-hundredth of the Stern approach.

The reason for the failure is clear, as has been emphasized by the work of Columbia’s Scott Barrett. Our climate agreements are ones without penalty for non-participation or non-compliance, so the result is that there is free-riding and minimal abatement. This is the lesson of economic theory and environmental history, and it has been proven accurate for climate agreements.

While I was generally familiar with work in this area, the failure of climate agreements was becoming clear as the Kyoto Protocol was dying in the 2010–2012 period. I remember exactly when the neurons connected. I was discussing the Greek economic crisis in my macroeconomics class, and I wondered, “Why does Greece stay in the Eurozone? Why not just pull out, and perhaps out of the EU as well?”

The answer to the Greek conundrum was clear. The EU is a “club” with dues and privileges. The dues are adherence to the rules, such as regulations and rules on democracy; the privileges are enjoyment of an enormous borderless economy, with free trade in goods, services, and finance. I began to study the theory of clubs and to look at other political clubs such as NATO, the United States, and the World Trade Organization. Each of these has the same structure – dues and privileges. The successful ones have benefits greater than costs as well as coalition stability. The unsuccessful ones (such as the League of Nations, the Kellogg–Briand Pact that outlawed war, and the Kyoto Protocol) were ones where free-riding and low benefits led to their demise.

Building on other work, I developed the idea of a “climate club” in my 2015 presidential address to the American Economic Association. An important aspect of the climate club, and a major difference from current proposals, is to create a strategic situation in which countries act in their self-interest to enter the club; they undertake high levels of emissions reductions because of the structure of the incentives. In the language of John Nash’s beautiful theory, change the structure so the non-cooperative strategy yields an equilibrium with high participation.

Although it is easy to design potential international climate agreements, the reality is that it is difficult to construct ones that pass two tests: to be effective and stable. Effective means abatement that is close to the level that passes a global cost-benefit test. Coalition stability means that no country or group of countries can improve its welfare by changing its participation status. Existing approaches from Kyoto to Paris fail these important tests.

The proposed climate club is simple: A coalition of countries forms a group, similar to the EU or the WTO, in which they agree to set a target of a minimum domestic carbon price of, say, $50 per ton. Countries that decline to join the club are penalized with duties of, say, 3% on all their exports into the club. This is conceptually the same structure as the European Union.

I built a model of coalitions (the Coalition-DICE model) to test different carbon prices and tariffs. The major empirical findings were these. First, a club with a zero-penalty tariff would have no members (which is true of the Kyoto Protocol). Second, for prices up to $50 per ton of CO2, modest penalty tariffs of less than 5% would induce most countries to participate (as they do in the WTO). It cannot be proven mathematically, but it seems clear that some version of a climate club is our only hope for inducing widespread participation and abatement in a strong climate treaty.
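The logic of participation can be illustrated with a deliberately tiny sketch. It is not the Coalition-DICE model: the three countries, their export values, the quadratic cost function, and names such as `Country` and `stable_club` are all hypothetical, chosen only to show how a penalty tariff changes the self-interested choice to join. A country stays in the club when the tariff it would face as an outsider exceeds its net cost of adopting the club's carbon price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Country:
    name: str
    exports: float     # hypothetical total exports, spread evenly over the other countries
    cost_coeff: float  # hypothetical coefficient of a convex net abatement cost


def abatement_cost(country: Country, price: float) -> float:
    """Assumed net cost of adopting the club carbon price (quadratic in the price)."""
    return country.cost_coeff * price ** 2


def tariff_loss(country: Country, members: set, n: int, tariff: float) -> float:
    """Assumed loss to a non-member: the tariff applied to its exports into the club."""
    others_in_club = len(members - {country.name})
    return tariff * country.exports * others_in_club / (n - 1)


def stable_club(countries: list, price: float, tariff: float) -> set:
    """Start from full participation and let members defect until no one wants to leave."""
    members = {c.name for c in countries}
    n = len(countries)
    while True:
        leavers = {
            c.name for c in countries
            if c.name in members
            and tariff_loss(c, members, n, tariff) < abatement_cost(c, price)
        }
        if not leavers:
            return members
        members -= leavers


# Illustrative numbers only; they are not taken from any calibrated model.
WORLD = [
    Country("A", exports=1000.0, cost_coeff=0.004),
    Country("B", exports=200.0, cost_coeff=0.004),
    Country("C", exports=500.0, cost_coeff=0.002),
]

if __name__ == "__main__":
    for rate in (0.0, 0.03, 0.06):
        print(rate, sorted(stable_club(WORLD, price=50.0, tariff=rate)))
```

In this toy setting a zero tariff leaves the club empty, while a higher tariff draws in the less trade-exposed country as well. Because the incentive to stay only weakens as others leave, defection from full membership shrinks the club step by step until it reaches a coalition that no member wants to quit.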

In December of 2018, Barbara and I were joined by our children (Jeff, Monica, and Rebecca), son-in-law (Will Curry), grandchildren (Annabel, Margot, and Alexandra), family (Barbara’s brother Chris Feise and my nephew Ted Nordhaus), and close colleagues (Jesse Ausubel, Lint Barrage, Lars Bergman, Nebojsa Nakicenovic, and Gary Yohe) in Stockholm. This was a wonderful celebration of our many decades together.

Figure 5. Stockholm, December 10, 2018

Photo: Alexander Mahmoud

Looking backward and looking forward

In Edward Bellamy’s futuristic 1888 novel, Looking Backward, Julian West falls asleep in 1887 and wakes up 113 years later in Boston to survey the society of 2000. He finds a socialist society, complete equality, no pollution, and nationally owned industry. The economy is managed much like an idealized version of Soviet central planning. Money has been replaced by cardboard credit cards that have punch holes like IBM cards. By 2000, the well-oiled machinery produces a cornucopia of nineteenth-century products.

The economic fantasies in Looking Backward are basically all wrong. Bellamy’s vision did not foresee air travel, nuclear weapons, computers, the Internet, cyberwarfare, the miracles of modern medicine, or climate change. The vision of comprehensive central planning collapsed with the Berlin Wall in 1989. Even with modern computers, the economy is too complex to be managed by the largest and most idealistic of hierarchies. Instead, countries have found the formula for prosperity in the mixed economy: the rule of law and government support for basic science, alongside the profit-oriented research and innovation of the market.

Looking Backward reminds us of the profound difficulty of predicting the structure of our societies far into the future. If we go to sleep today and wake up at century’s end, what will we find in 2100? Will it be a dystopian landscape where the successors of today’s thuggish leaders find new tales to spin about climate change? Will ocean crustaceans be a footnote in the cookbooks? Will the Western forests of the United States be replaced by char and savannah? Will Weitzman prove a prophet as the outlier events are realized and are much worse than we imagine today?

It need not be so. We should instead gather strength from our valiant high-school science teachers, our great research universities, and forward-looking scientific prizes. We should insist that the data-based findings and theories of natural and social scientists replace fake facts and false narratives. We should persuade nations to look to the EU, the WTO, and similar club-like organizations as models for global governance in human rights, non-proliferation, and climate control. The emissions and temperature curves then might point down rather than up.

Our futures are not in the stars but in ourselves. I will not be here in 2100 to witness the results of our efforts. However, my grandchildren are likely to be here, with their children and their grandchildren. May they be looking backward with appreciation. I hope they will say that this generation had the resolve to overcome the obstacles and take the steps necessary to preserve our unique and beautiful planet.

This autobiography/biography was written at the time of the award and later published in the book series Les Prix Nobel/ Nobel Lectures/The Nobel Prizes. The information is sometimes updated with an addendum submitted by the Laureate.