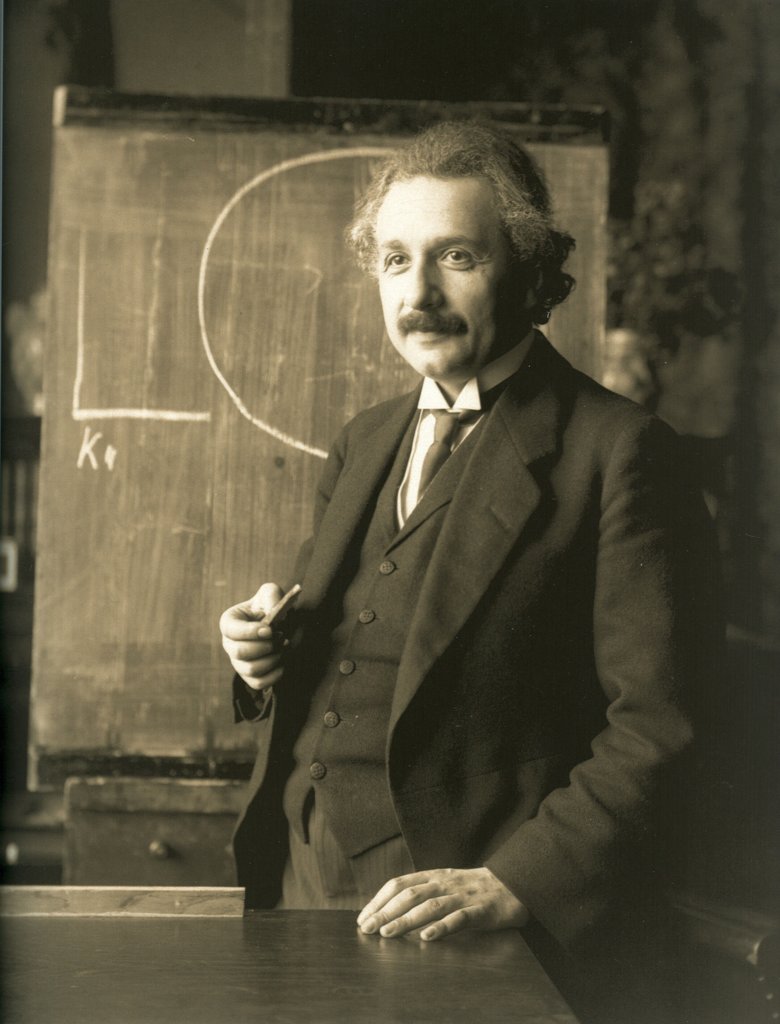

Albert Einstein

Nobel Prize in Physics 1921 for his services to Theoretical Physics, and especially for his discovery of the law of the photoelectric effect.

Learn more about how the photoelectric effect has shaped technologies such as burglar alarms, solar panels and the camera in your smartphone.

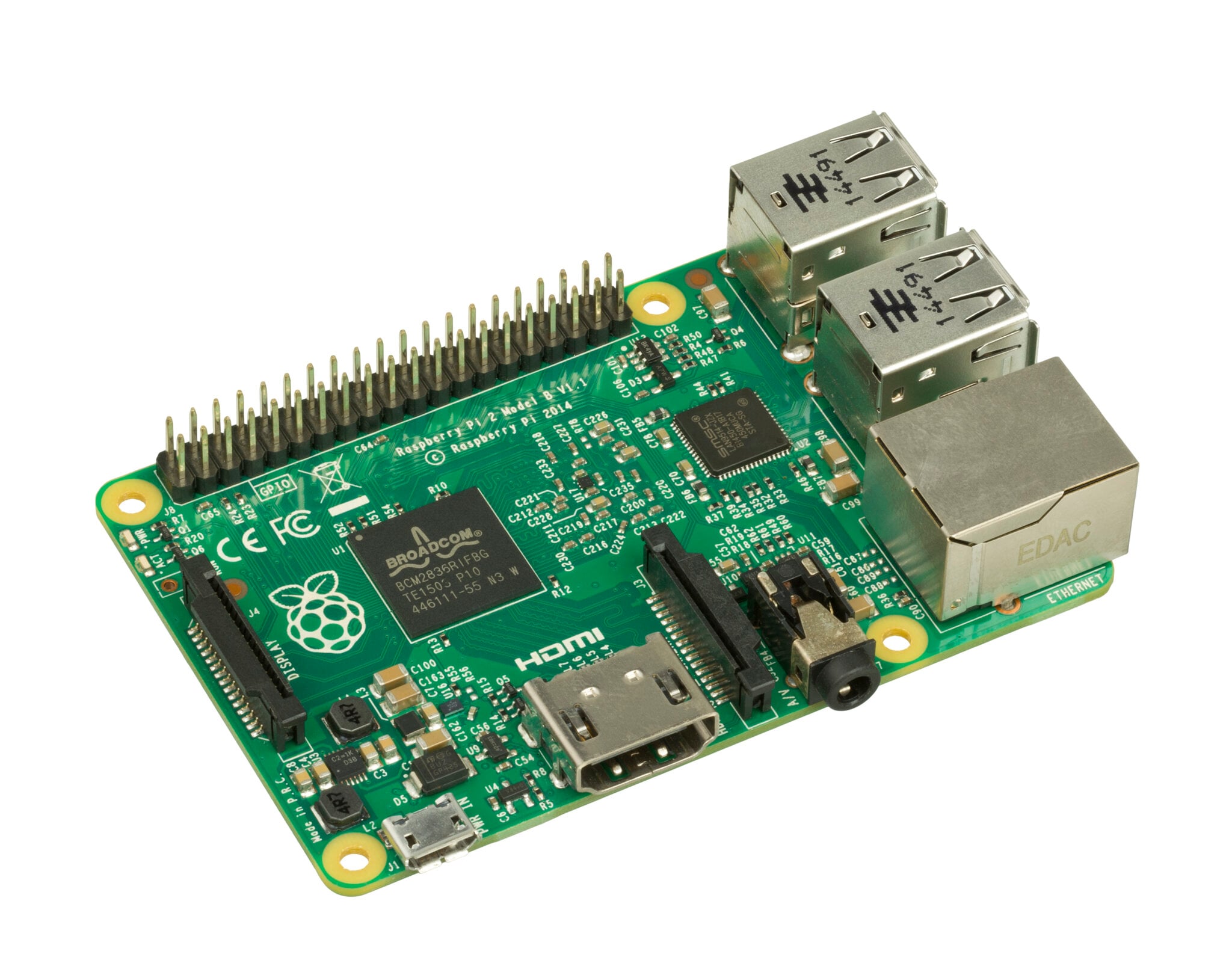

The engineer picked up a camera flash gun, aimed it at the tiny circuit board computer on the desk, and fired. For a fraction of a second, light flooded the room. Everyone blinked – and saw that the computer had crashed.

“We all had fun crashing it,” recalls Eben Upton, founder of Raspberry Pi. They had realised that a chip on the computer was susceptible to the photoelectric effect – when light triggers the release of electrons, and thus an electrical current. A kind of reverse “light switch”, if you like.

Upton and his colleagues had not anticipated this problem. It was discovered by a Raspberry Pi 2 user less than a week after the device went on sale in early 2015. In subsequent versions of the computer, the troublesome chip featured a black coating thick enough to soak up incoming light.

More than a century earlier, Albert Einstein had described the photoelectric effect in a ground-breaking paper – one of four seminal papers he published in 1905 while working as a clerk in the Swiss patent office. Later, in 1921, he received the Nobel Prize in Physics for it.

The photoelectric effect has gone on to shape all kinds of technologies – from burglar alarms to solar panels and the camera in your smartphone.

To understand it better, consider the question that gripped Einstein back in 1905: what is light made of?

At the time, many scientists theorised that light existed purely as a wave, which some suggested travelled across the universe in an intangible “light-bearing ether”. But to Einstein, this idea seemed ridiculous – “like Father Christmas”, says Steve Gimbel at Gettysburg College in the US.

Scientists including Heinrich Hertz had already demonstrated versions of the photoelectric effect by using light to generate tiny sparks, or to electrically charge pieces of gold leaf, causing them to repel each other.

“There were certain weird, unexplained phenomena where light could create electricity and that just blew people’s minds – that seemed to make no sense,” says Gimbel.

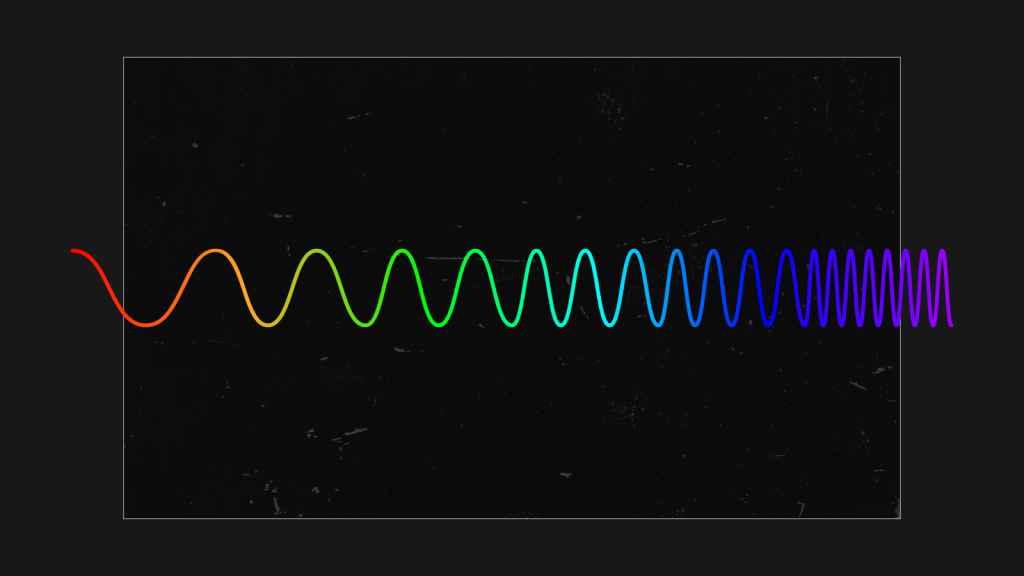

The weirdest thing was that the intensity of light didn’t affect the energy of the electrons produced whereas the frequency, or colour, of the light did. This was mind-boggling. More light should mean more energy, right?

Well, Einstein realised that if light wasn’t just made up of waves but also discrete packets or particles (which later came to be known as photons) travelling in waves, then it could be that the energy of those individual particles would explain this.

“When a single photon hits an electron, it [the electron] gets excited,” explains Paul Davies, at the University of York. So long as that photon lands with enough energy, then the photoelectric effect occurs – and the electron is freed from the material.

Think of it like throwing tiny sticks of dynamite into an open barrel of cannonballs. The little explosions won’t be enough to knock out a cannonball, no matter how many times you fling one in. But if you use stronger dynamite, with more energy, that will make the cannonballs fly.

The energy value of a photon is directly related to the colour of visible light – photons in blue light travel on shorter waves and have more energy than those in red light, for instance. That’s why Hertz found that especially energetic ultraviolet light would produce stronger sparks during one of his experiments.
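The link between colour and photon energy can be made concrete with a short calculation. The sketch below is only an illustration: it uses the standard relation E = hc/λ, and both the wavelengths picked for red, blue and ultraviolet light and the 4.3 eV threshold (roughly the energy needed to free an electron from zinc) are assumed values, not figures from the experiments described above.

```python
# Photon energy from wavelength, E = h * c / wavelength.
PLANCK_H = 6.62607015e-34      # Planck constant, J*s (exact SI value)
LIGHT_C = 299_792_458.0        # speed of light, m/s (exact SI value)
EV = 1.602176634e-19           # joules per electronvolt (exact SI value)


def photon_energy_ev(wavelength_nm: float) -> float:
    """Return the energy of one photon, in electronvolts."""
    return PLANCK_H * LIGHT_C / (wavelength_nm * 1e-9) / EV


def frees_electron(wavelength_nm: float, work_function_ev: float) -> bool:
    """True if a single photon carries enough energy to free an electron."""
    return photon_energy_ev(wavelength_nm) >= work_function_ev


# Illustrative wavelengths (assumed): red, blue, ultraviolet.
SAMPLES_NM = {"red": 650.0, "blue": 450.0, "ultraviolet": 250.0}
THRESHOLD_EV = 4.3  # assumed threshold, roughly the work function of zinc

if __name__ == "__main__":
    for name, nm in SAMPLES_NM.items():
        energy = photon_energy_ev(nm)
        print(f"{name:12s} {nm:5.0f} nm  {energy:.2f} eV  "
              f"frees electron: {frees_electron(nm, THRESHOLD_EV)}")
```

With these assumed numbers, neither red nor blue photons clear the threshold however many arrive, while each ultraviolet photon does. That mirrors the observation that frequency, not intensity, decides whether electrons are freed.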

Gimbel stresses that Einstein didn’t come up with this theory out of nowhere. He drew not only on work by Hertz and others, but also on physicist Max Planck’s theory of “quanta” – the idea that radiation, including light, consists of discrete packets of energy, for which Planck also received a Nobel Prize in Physics, in 1918. But in 1905 this concept was still controversial.

“Einstein had this revolutionary mind where he was willing to consider other approaches,” says Gimbel. “He took seriously this idea that light could be quantised.”

Scientists have long debated whether this was the best choice but there is little doubt that harnessing the photoelectric effect has changed the way our world works, since so many technologies rely on it.

Motion sensors in burglar alarm systems, for example, emit a beam of infrared light. When this beam is interrupted by an intruder, the light received by the sensor changes, altering the electrical current – and that sets off the alarm.

Finish lines at races held in the Olympic Games have used photoelectric cells to detect exactly when runners cross. Such technology has allowed ships to sense fog, and automatically switch on foghorns. It has also enabled cars to turn on their windscreen wipers spontaneously when it rains.

Strictly speaking, the photoelectric effect refers to a phenomenon in which electrons escape a material – but Davies says this is closely related to the photovoltaic effect, where movement of electrons facilitates an electrical current flowing through adjacent materials.

That’s what solar cells in solar panels do when they turn sunlight into electricity, contributing clean, renewable energy to electricity grids and tackling climate change.

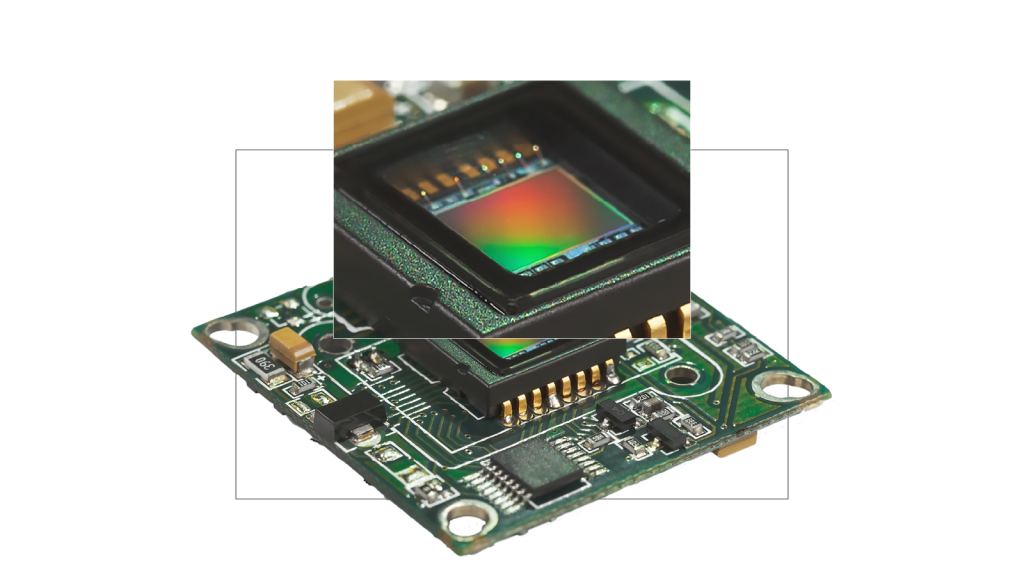

Another popular application of the photoelectric effect is in camera sensors, the light-sensitive part of a digital camera that captures images. Nearly all use CMOS technology, which was fine-tuned at Nasa in the 1990s for use in space, but came to be installed on billions of smartphones. “The CMOS image sensor was the perfect device, let’s say, for that. It turned out to be the killer application,” says engineer Eric Fossum, who worked on the project.

Silicon is the key material used in CMOS sensors and Fossum, now at Dartmouth College, notes that the photoelectric effect in silicon is triggered by many colours of light.

“It doesn’t matter whether it’s green light, red light, or blue light – a photon will liberate exactly one electron. We’re kind of lucky that way.” This really helps when you want to capture a subject’s colour in full detail.

Now, Fossum and colleagues are working on image sensors sensitive to the smallest imaginable amount of light – a single photon. These devices, also known as photon-counters, are already used for laboratory experiments but they could also revolutionise digital imaging technologies, for example by improving image quality in medical CT scanners, and exposing patients to less radiation. The potential applications don’t stop there. “We’ll have the capability to practically see in the dark with this new technology,” says Fossum.

Another scientist working on devices that harness the photoelectric effect is Dimitra Georgiadou at the University of Southampton. She and her colleagues are developing technologies that can detect light and process information about it without having to send data to a central computer system for analysis. “This reduces significantly the amount of energy it needs,” says Georgiadou.

This might help researchers develop highly advanced bionic eyes and give sight to blind people by enabling the design of smaller, easier to implant, and more energy-efficient devices. It could also enable self-driving cars to make faster decisions about when to brake for safety reasons.

The light-sensing technology Georgiadou is focused on does not rely on silicon but rather organic, carbon-containing, materials – these can be tuned to respond only to specific colours of light, and also printed on flexible substrates.

Such technology could turn up in wearable, low-power light sensors able to track the heart rate and blood oxygen levels of premature babies, for example, by shining small amounts of light through their skin and into their veins.

Since Einstein wrote down his theory on the photoelectric effect in 1905, we’ve certainly come up with a lot of fun things to do with it. But there’s more. Understanding this incredible interaction of light and matter has revealed curious details about the way the universe works.

In the 1960s, some of the earliest moon landers took pictures of the lunar horizon and noticed something strange: a weird glow, almost like a gently fading sunset. Except that the moon doesn’t have an atmosphere like Earth’s, and it’s the scattering of light by particles in our atmosphere that creates sunrises and sunsets as the planet turns on its axis.

Where was this lunar glow coming from? It turned out that light from the sun was striking dust on the moon’s surface and, through the photoelectric effect, giving it a positive electrical charge.

These little dust particles thus repelled each other, periodically levitating above the lunar surface. As they did so, they caught the light of the recently set sun – and created that magical glow.

By Chris Baraniuk, BBC World Service. This content was created as a co-production between Nobel Prize Outreach and the BBC.

Published May 2026

A chance discovery made around 80 years ago paved the way for Mary Brunkow’s painstaking work, which contributed to redefining how the immune system functions. From investigating an obscure gene dismissed as ‘junk’ by other scientists, to choosing to opt out of academia and conduct her research at a biotech startup, Brunkow has embraced the unexpected throughout her career.

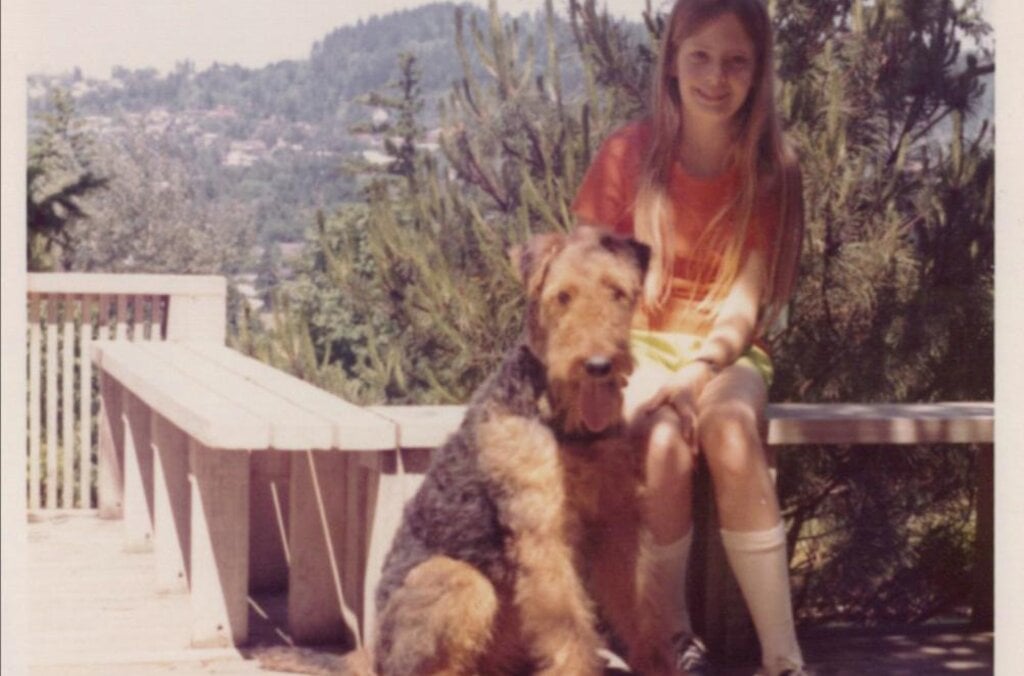

Mary Brunkow was born in 1961 in Portland, Oregon, the middle child of three. In high school, she enjoyed basketball and ran for the track and cross-country teams, but was, by her own admission, pretty bad at both. She was, however, a good student with a love of maths and science, especially genetics.

Brunkow longed to go to university in a big city and, after graduation, started on the pre-med track at the University of Washington. She was inspired by her paternal grandfather who was a doctor and surgeon, and had a strong desire to help people. However, after taking a genetics class in her final year at university and getting her first taste of lab work, she realised research was where her future lay. “The minute I walked into that research laboratory, it was just an environment that really struck me,” she said.

“I like the idea of something really concrete and coming up with a discovery that proves something.”

Mary Brunkow

The lively and collaborative atmosphere of the lab stirred Brunkow to continue her studies at Princeton University as one of the first graduate students to join its department of molecular biology. She made the brave decision to focus on a seemingly obscure gene dismissed as ‘junk’ by other scientists for her PhD, before carrying out postdoctoral research at the University of Toronto.

While her fellow postdocs stayed in academia, Brunkow wanted to do something with a more direct application to human health. It was an exciting time in science. The Human Genome Project – an ambitious international research effort aimed at deciphering the chemical makeup of the entire human genetic code – was running at full speed and new genomics-related technologies were taking off. Her postdoctoral research over, she decided to take another unexpected path, joining a young biotechnology company in a Seattle suburb that developed pharmaceuticals for autoimmune diseases. According to Brunkow, the competition and self-promotion she had seen in academia were absent. “Everybody was working towards the same goal of finding new drug targets that would eventually be hopefully developed into drugs,” she said. There, Brunkow learned of a mouse genetics programme set up following the Second World War to understand how the effects of radiation might impact human health and alter genes.

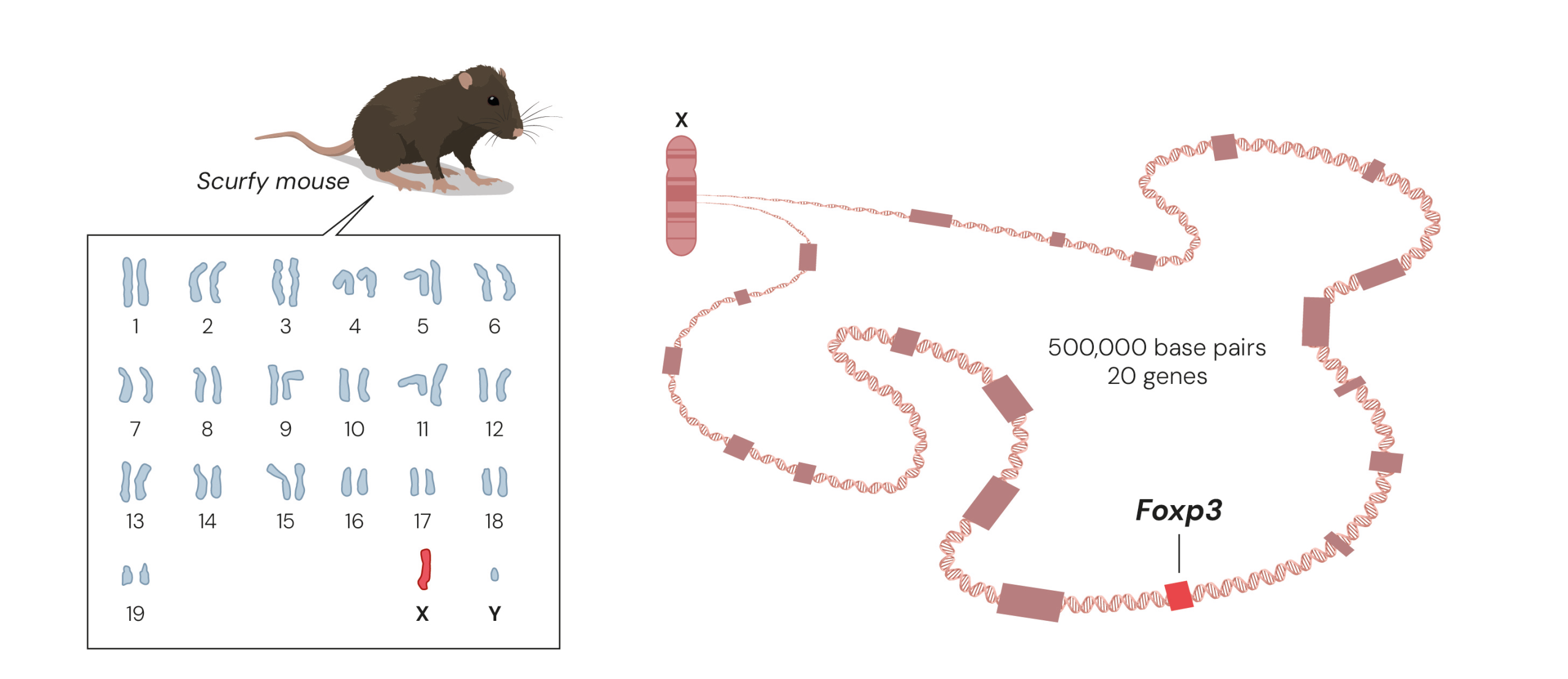

A chance discovery of a spontaneous genetic mutation in mice made almost 80 years ago turned out to be the foundation for Brunkow and her colleague Fred Ramsdell’s Nobel Prize-worthy work. The mutation, known as scurfy, only affects male mice who show several hallmarks of severe autoimmune disease, where the immune system attacks almost every organ in their body. Yet the gene that caused the serious symptoms was unknown. The duo realised that if they could understand the molecular mechanism underlying the mice’s disease, they could gain decisive insights into how autoimmune diseases arise.

Today, it is possible to map a mouse’s entire genome and find a mutated gene in a few days, but in the 1990s it was like looking for a needle in a huge haystack. Mapping had shown that the scurfy mutation must be somewhere in the middle of the X chromosome, so Brunkow and Ramsdell painstakingly mapped that area of the X chromosome, eventually narrowing their search and identifying 20 possible target genes. “It was really a molecular slog to get to that exact mutation, because it was just a very small genetic alteration that results in quite a profound change in the immune system,” Brunkow said.

She bred her own colony of scurfy mice from the same line, taking over and adapting a disused janitor’s closet to provide space to do so. By comparing the genes in healthy mice and scurfy mice they discovered a minor change in a gene that would later be named Foxp3.

During their work, Brunkow and Ramsdell had begun to suspect that a rare autoimmune disease, IPEX, which is also linked to the X chromosome, might be the human variant of the scurfy mice’s disease. In 2001, they revealed that mutations in the Foxp3 gene cause both the human disease called IPEX and the scurfy mice’s ill health.

In 2025, Brunkow and Ramsdell shared the Nobel Prize in Physiology or Medicine with Japanese immunologist Shimon Sakaguchi. Their discoveries launched the field of peripheral tolerance, spurring the development of medical treatments for cancer and autoimmune diseases.

Embracing the unexpected and following the path less travelled has yielded Brunkow a fascinating and inspiring career, combining academic research, biotech startups, consulting, science communication and programme management.

“Discoveries come from places where you never imagined, so you have to keep an open mind and keep your eyes open to different pathways, as you’re journeying through a topic of interest, but also an open mind in terms of career path and where you go in life.”

Mary Brunkow

“I would say I’m a pretty good example of … one who has kind of changed directions and not stayed on one single track. Sometimes doors open off to the side a little bit. There’s nothing wrong with exploring different options.”

Learn more about some of the Nobel Prize-awarded discoveries in the fields of cells, nerves and genes.

Cells, nerves and genes are fundamental to all living organisms. They control life’s processes and development. Often, this is where illness and suffering are caused. Knowledge about cells, nerves and genes is built on centuries of exploring life’s smallest details. New instruments and methods have helped to give us pictures of previously unknown worlds.

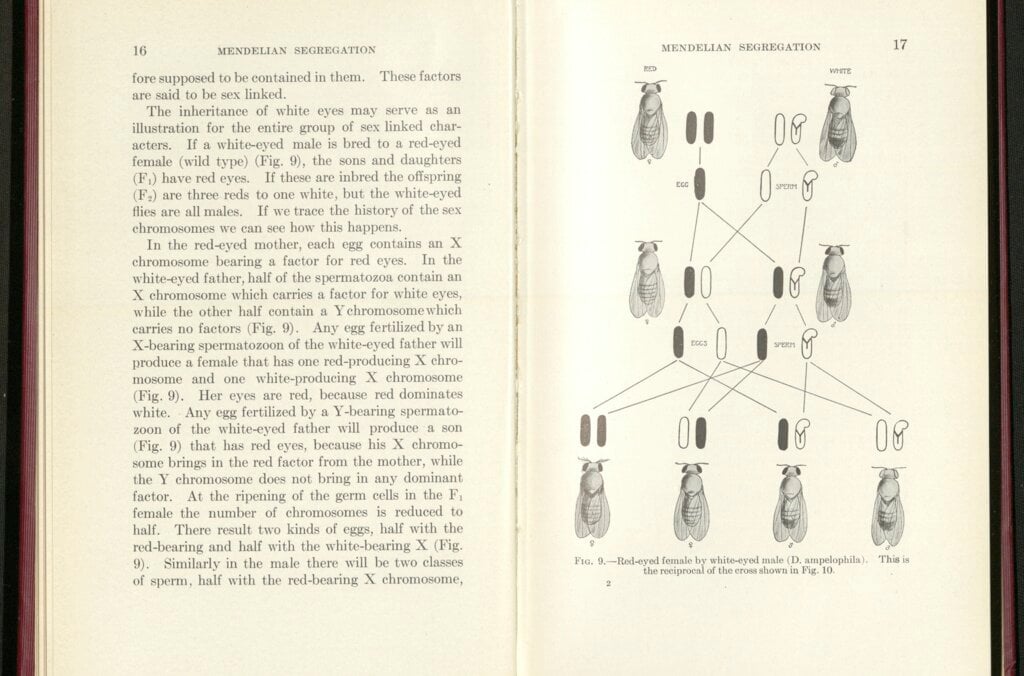

In 1931–1933, Karolinska Institutet (KI) awarded the Nobel Prize in Physiology or Medicine for discoveries in the fields of cells, nerves and genes. Otto Warburg (1931) measured the exchange of energy in cells. Charles Sherrington and Edgar Adrian (1932) studied impulses in the nervous system. Thomas Hunt Morgan (1933) defined modern gene theory by mapping the chromosomes of the fruit fly (Drosophila melanogaster). These laureates all defined and measured processes directly and in real time on the cell, nerve and chromosome level.

Here, you can learn more about their and some of their successors’ Nobel Prize-awarded work.

The content is taken from the exhibition Cells, nerves, genes, a collaboration between the Nobel Prize Museum and Medical History and Heritage at the Karolinska Institutet University Library.

British biochemist Peter Mitchell first published his ideas on the proton motive force in Nature in 1961. The equations here suggest the mitochondrial network. Cellular level art, paint on silk, digitised.

Licence: Attribution-NonCommercial 4.0 International (CC BY-NC 4.0) https://creativecommons.org/licenses/by-nc/4.0/. Credit: Mitchell’s equation I. Odra Noel. Source: Wellcome Collection.

Otto Warburg was awarded the Nobel Prize for his biochemical research into how energy is released in the chemical processes of living cells. In the respiration of cells, nutrients are broken down while oxygen is consumed and energy released. Warburg measured oxygen consumption in the cells and studied the effect of enzymes on these processes, developing ground-breaking experiments and apparatus for this purpose. This made him the most copied biochemist in the first half of the 1900s.

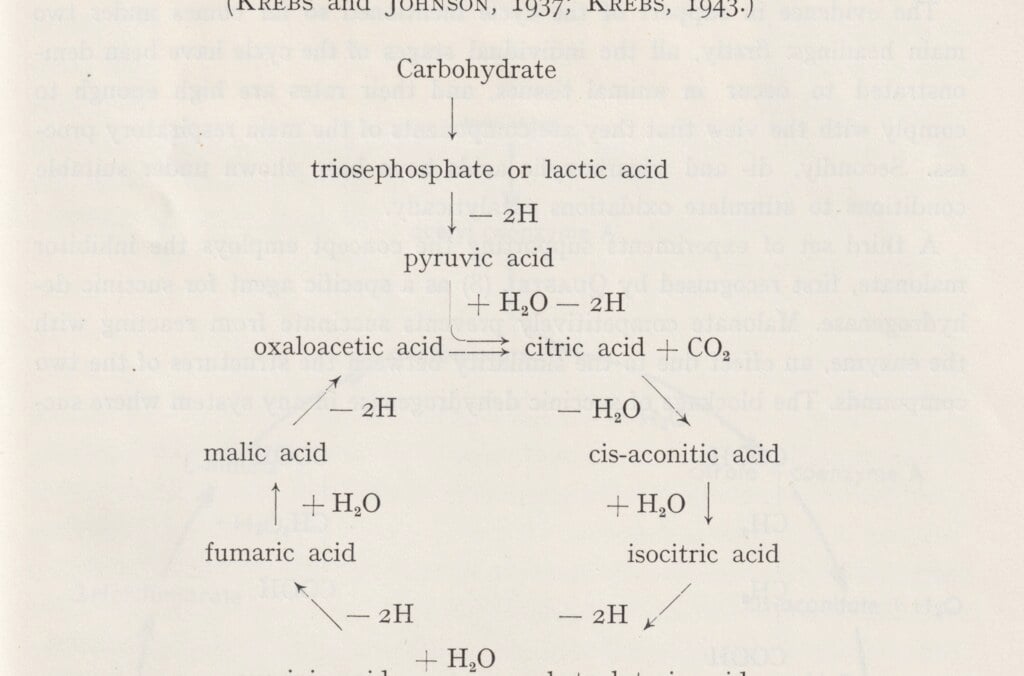

Hans Krebs was awarded the Nobel Prize for his medical discovery of the citric acid cycle, the central cellular metabolic process that breaks down nutrients and releases chemical energy in the form of ATP (adenosine triphosphate). The ATP molecule transfers and provides energy used by cells to power a variety of processes. The citric acid cycle also produces the components for building other molecules. Krebs began his career as a colleague of Otto Warburg in Berlin. After the Nazi take-over in Germany, he moved to the UK and became a professor at Oxford University.

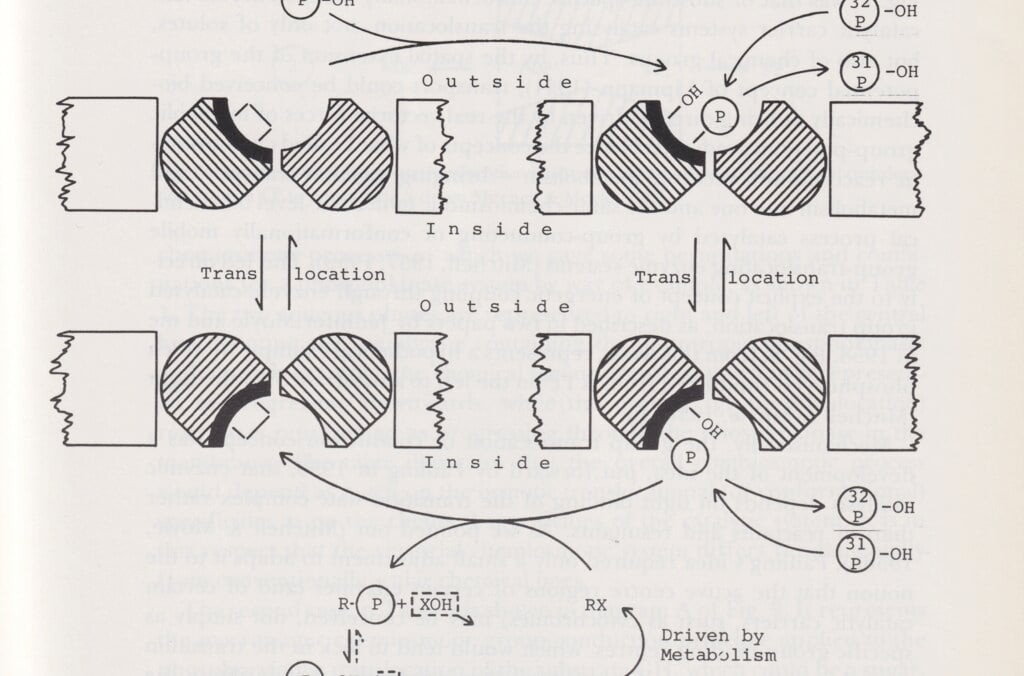

Peter Mitchell explained how ATP molecules transfer energy in cells. This discovery helped to explain fundamental processes such as cell respiration, photosynthesis and bacterial metabolism. His chemiosmotic theory demonstrated that the fundamental mechanism is a process that takes place at the subatomic level in the cell. It begins with a flow of electrons and hydrogen ions across the membranes of the cell’s mitochondria, known as the “powerhouses of the cell.” The process is controlled by the interaction of several enzymes.

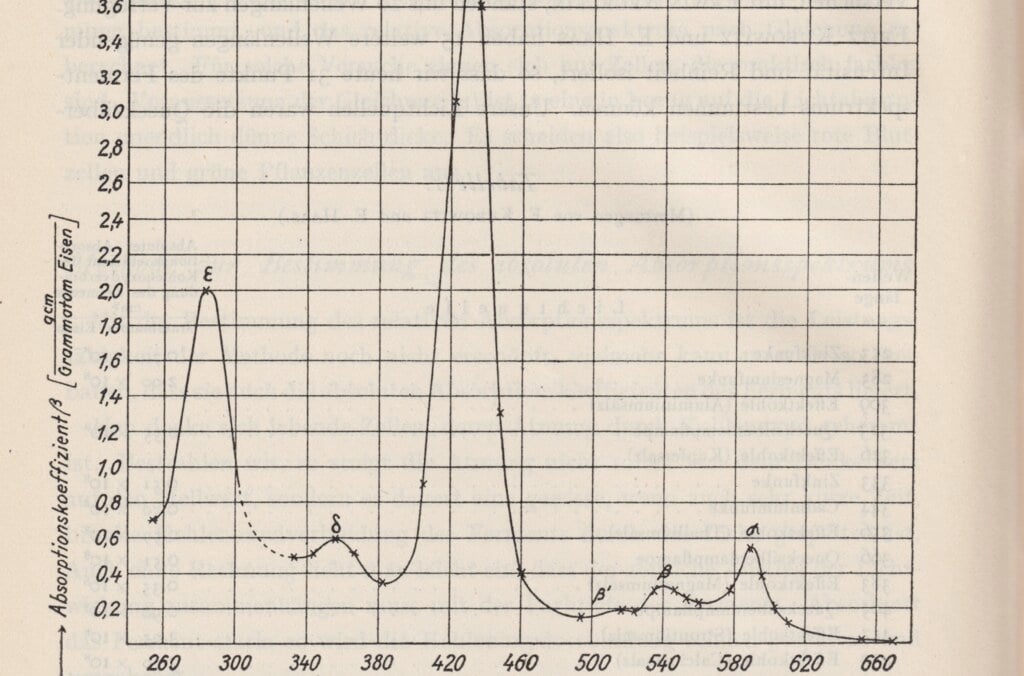

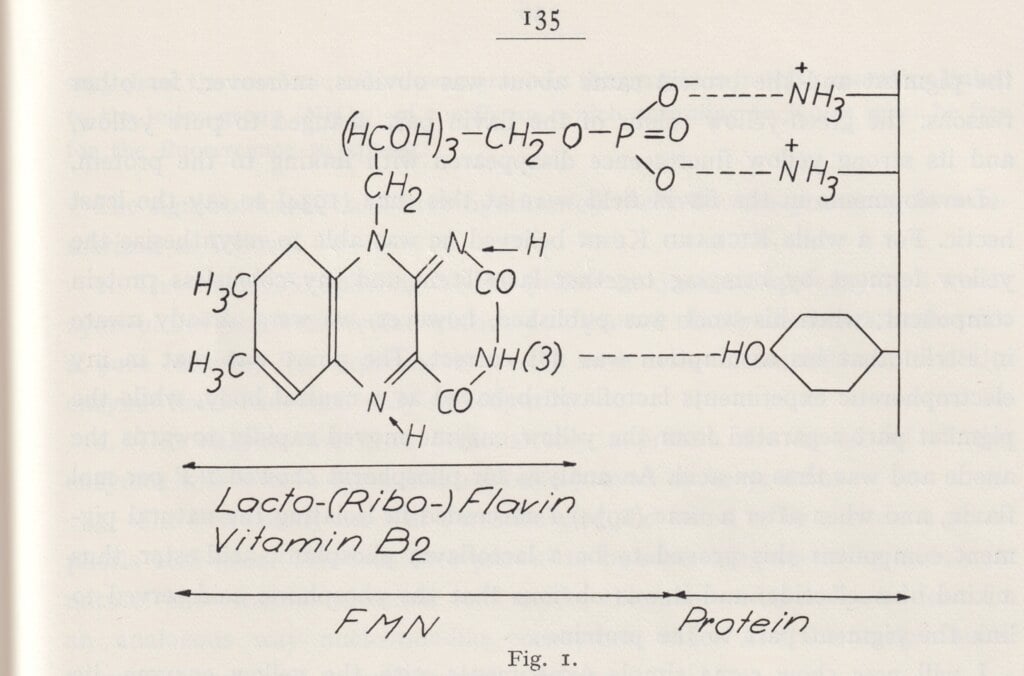

Hugo Theorell showed how oxidative enzymes affect cell respiration. His research laid the foundation for the modern understanding of how cells produce energy from oxygen and nutrients. Theorell made his discoveries in 1935 at Otto Warburg’s laboratory in Berlin. When the Nobel Institute for Medicine was founded at KI, Theorell was offered a position in Sweden to return to.

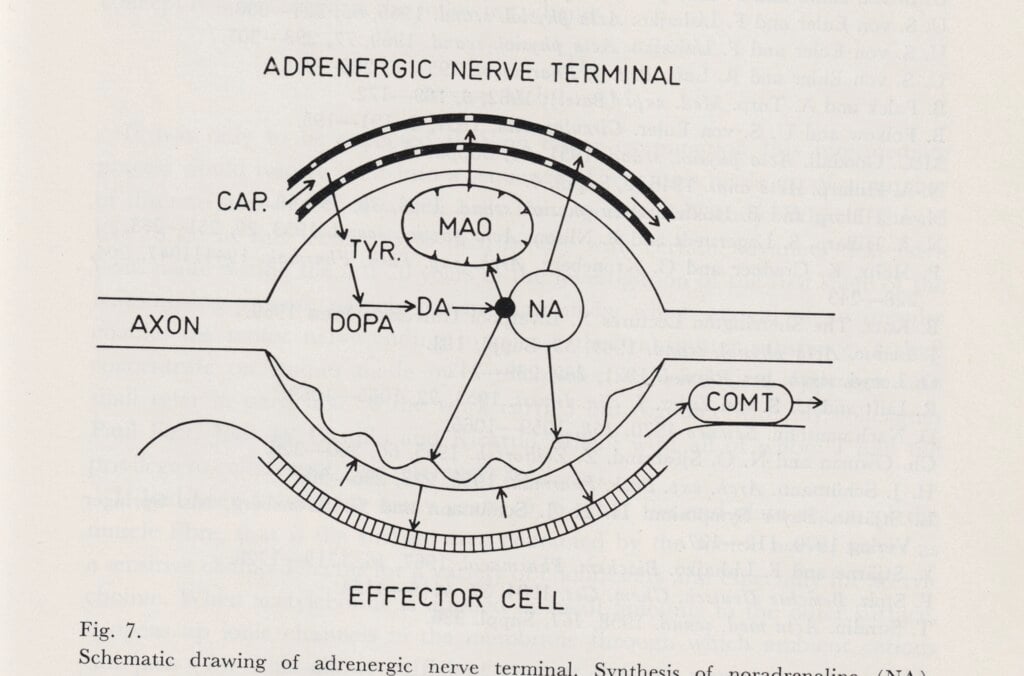

Unlike most prominent Swedish physiologists who focused on the electric signals of the nervous system, Ulf von Euler researched chemical signals at cellular level. In 1940, he found that noradrenaline is a signal substance in the nervous system, and was awarded the medicine prize in 1970 for his discovery. Von Euler was appointed professor of physiology in 1937 at the KI, where he collaborated mainly with Hugo Theorell’s department of biochemistry at the Medical Nobel Institute.

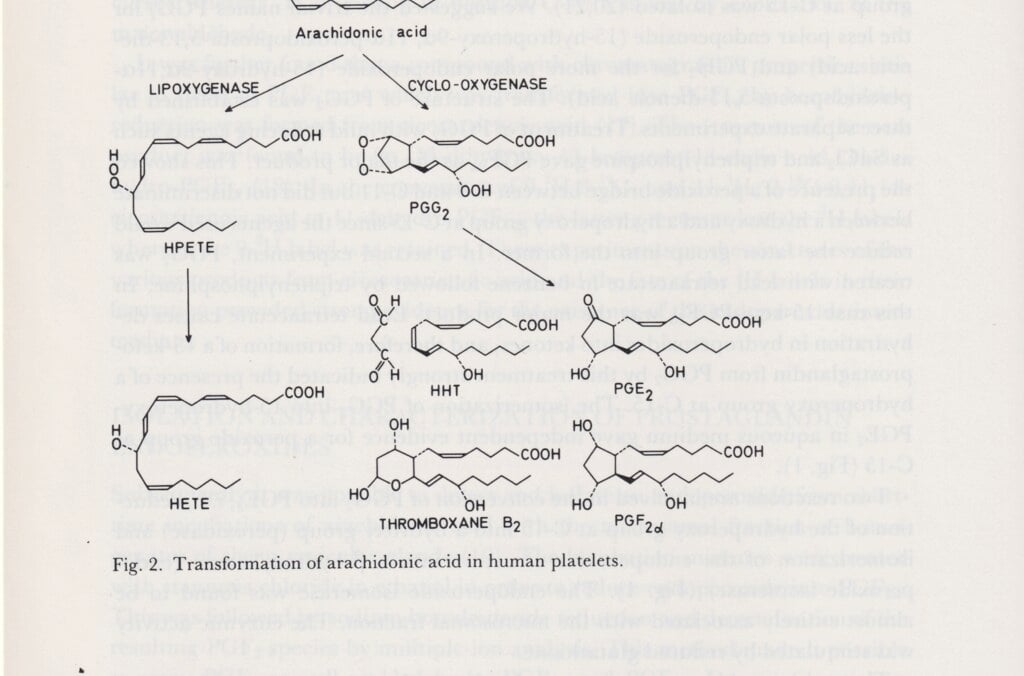

Sune Bergström, professor of chemistry at the KI, worked with Bengt Samuelsson and Jan Sjövall to explore prostaglandin. This is a hormone-like substance made of fatty acids. It affects blood pressure, menstruation and inflammatory processes in the body. As a pharmaceutical, it can induce labour, but it can also be used for medical abortion. Prostaglandins have been vital to the development of medications in a variety of fields, such as the treatment of blood clots, inflammations and allergies.

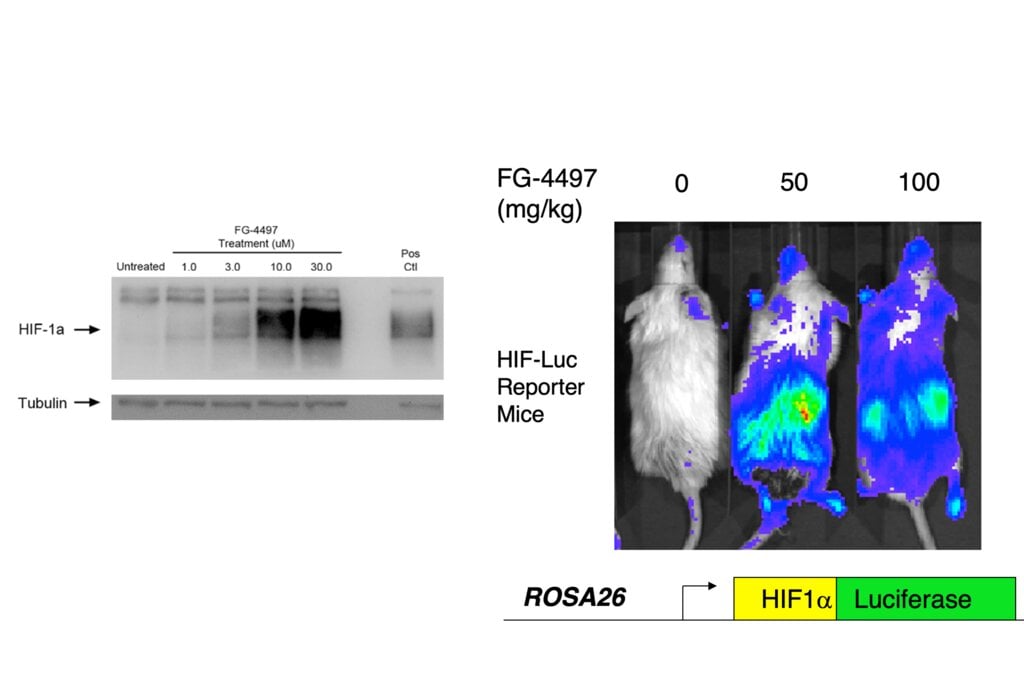

William Kaelin, Peter Ratcliffe and Gregg Semenza discovered how cells can detect and adapt to the oxygen supply. In the 1990s, they identified a molecular machinery that regulates the activity of genes in response to variations in oxygen levels. The discovery may lead to new treatments for anaemia, cancer and other conditions.

In the early 20th century, Spanish scientist Santiago Ramón y Cajal, known as 'Cajal', drew the first observed neurons as black shapes. The artist paints them here full of colour.

Licence: Attribution-NonCommercial 4.0 International (CC BY-NC 4.0) https://creativecommons.org/licenses/by-nc/4.0/. Credit: Cajal Neurons. Odra Noel. Source: Wellcome Collection.

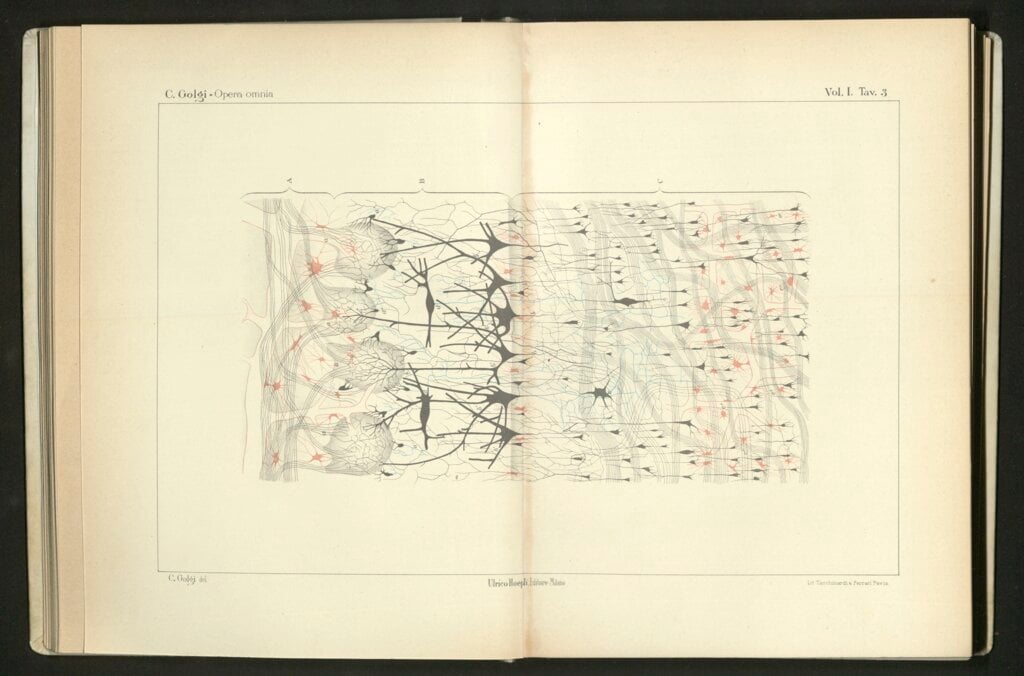

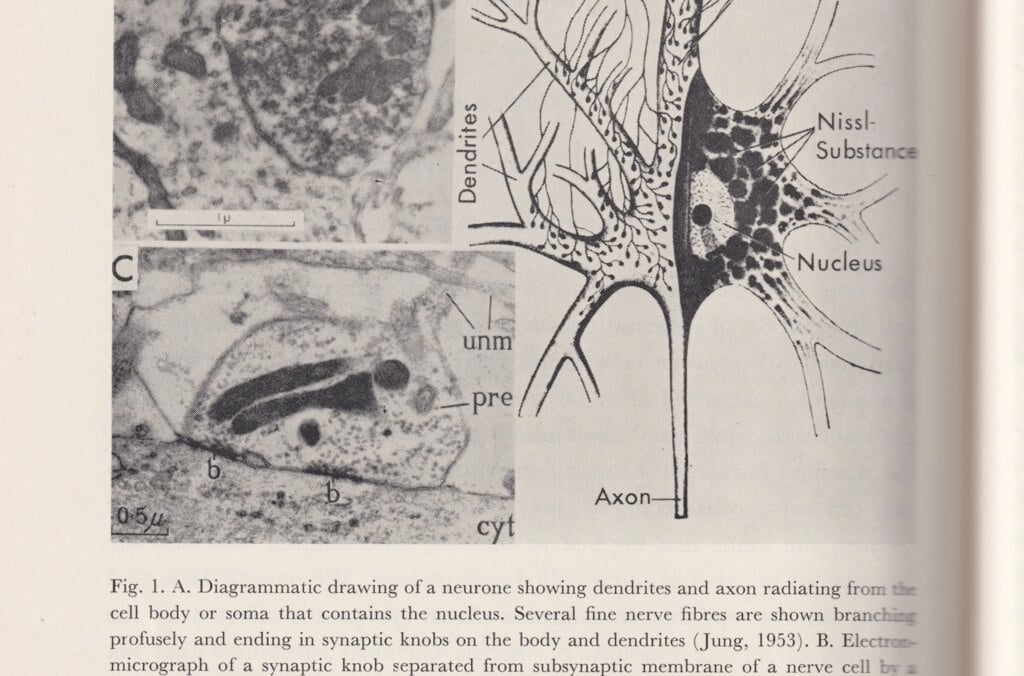

In the 1870s, Camillo Golgi came up with a method to dye cells, which made it possible to see cell details under a microscope. Both Golgi and Santiago Ramón y Cajal used and refined the method when mapping the anatomy and structure of the nervous system. Cajal contributed to developing the neuron theory: that the brain and nervous system consist of discrete elements, nerve cells or neurons, which are linked through contacts, later named synapses.

In the late 1800s, Charles Sherrington contributed to the theory that the nervous system consists of nerve cells, neurons, and that impulses are transmitted through contacts, synapses, between neurons. In experiments, he studied reflexes by charting the links between muscular movements and electric impulses to the nerves. The ability to examine how the nervous system works took a huge leap in 1925, when Edgar Adrian succeeded in measuring electric impulses in an individual nerve. He found that the individual impulses always have the same strength. Stronger stimulation does not make the impulses stronger; instead, they are transmitted more frequently and through more nerve fibres.

John Eccles, Alan Hodgkin and Andrew Huxley delved deeper into the nervous system around 1950, to study how electric signals are transmitted. Hodgkin and Huxley found that a basic mechanism consists of sodium ions and potassium ions migrating in opposite directions through the cell membrane, giving rise to electric tension. John Eccles could show that there are variants of synapses that are either excitatory or inhibitory.

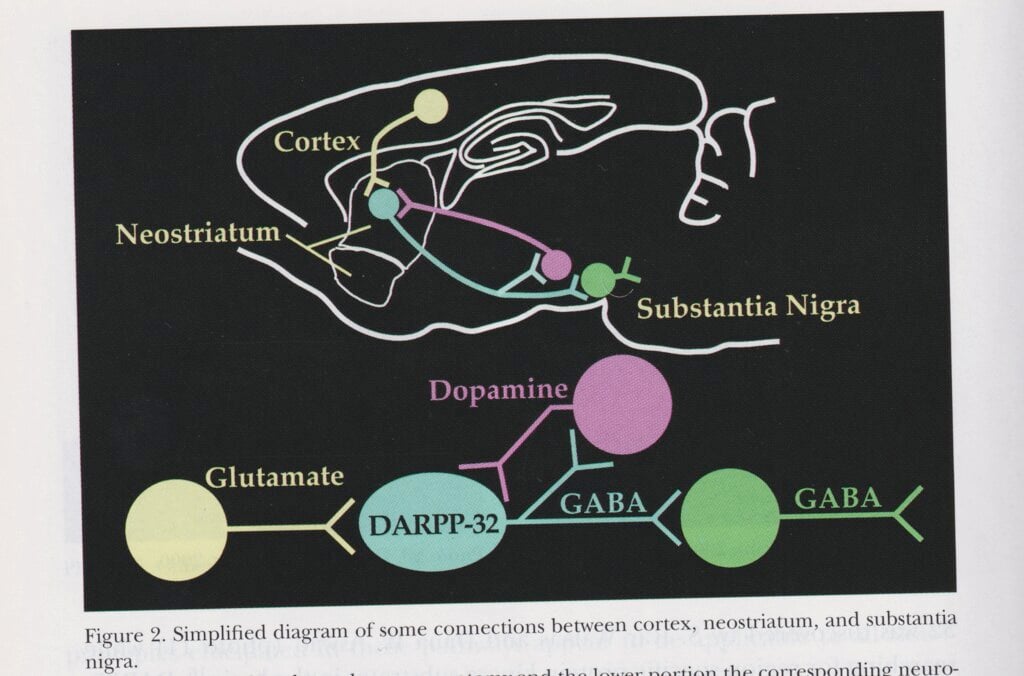

Arvid Carlsson, Paul Greengard and Eric R. Kandel examined how signals are chemically transmitted by various substances between synapses, the contact points between cells in the nervous system. Kandel could also show how these processes shape memories at synapse level. Carlsson’s research on the crucial importance of dopamine to normal movement in organisms led to the introduction of a substance called L-dopa, which the body transforms into dopamine, as a treatment for Parkinson’s disease.

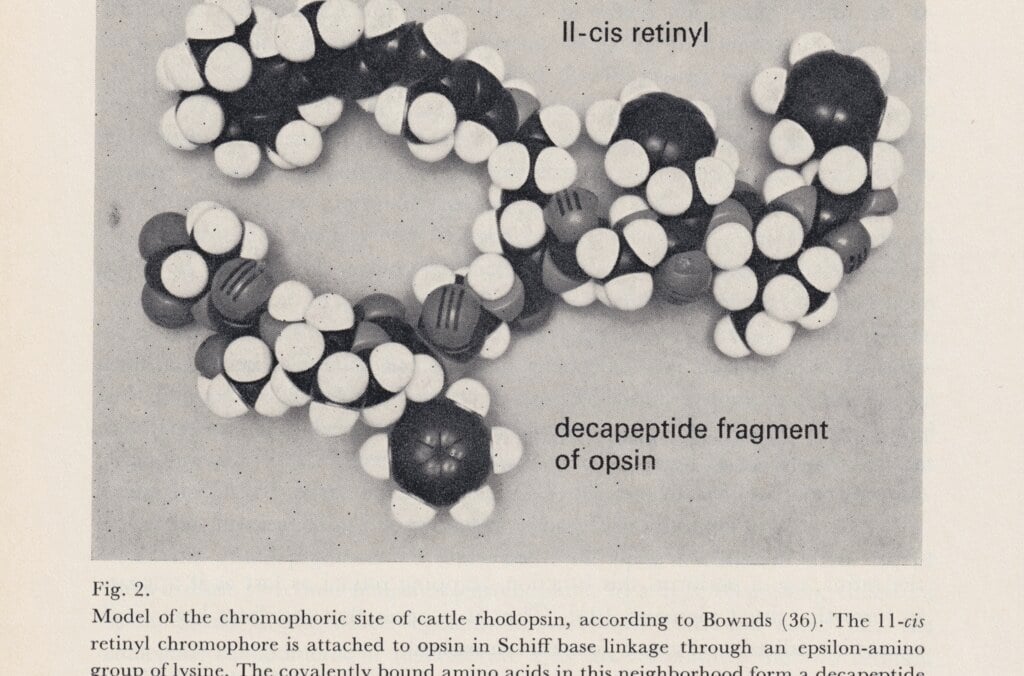

Ragnar Granit, Haldan Keffer Hartline and George Wald made ground-breaking discoveries about vision and the physiology of the eye. Ragnar Granit studied the electrical impulses from the retina cells. He could prove that the cone cells, which enable us to see colours, are of different kinds and respond to light within three spectrum zones. Granit began his career in Helsinki, but transferred to KI in 1946, where he was a professor of neurophysiology at the Medical Nobel Institute. He studied under Charles Sherrington at Oxford.

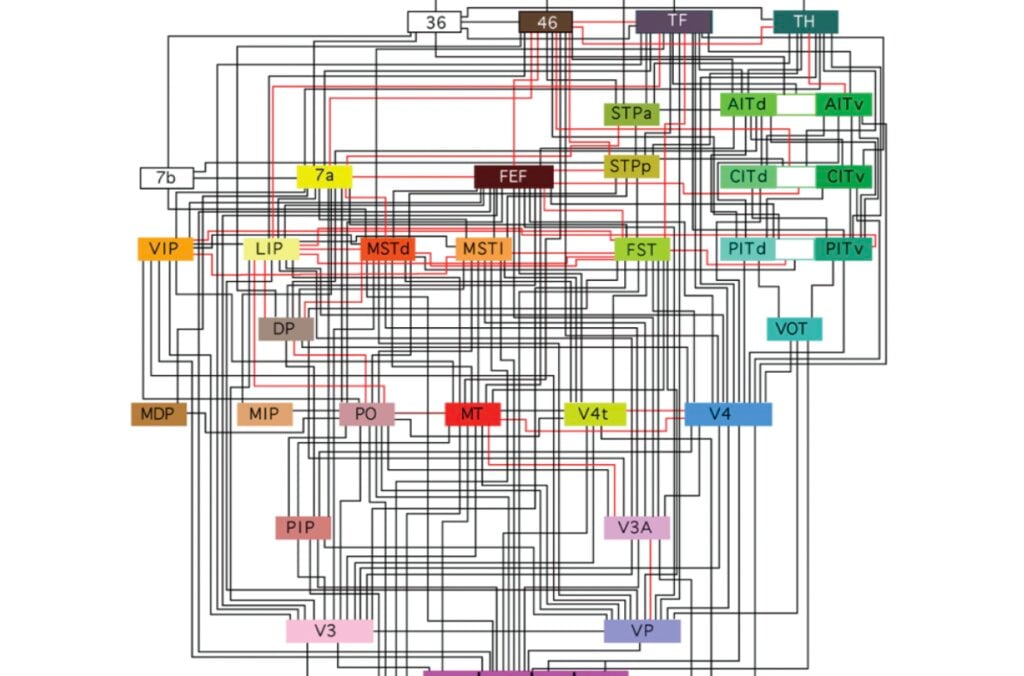

Roger Sperry showed that tasks are divided between the brain’s two halves – the left half handling abstract and analytical thinking, and the right processing spatial cognition and complex sounds. David Hubel and Torsten Wiesel recorded electrical activity from the nerve cells in the visual cortex. They discovered that different nerve cells react to specific visual stimuli, such as lines at a certain angle, movement or direction. Torsten Wiesel studied medicine at KI, where he also began his research before moving to the USA.

In recent decades, neurophysiological research has studied how information is formed in the brain. John O’Keefe could already measure in the 1970s how a rat’s spatial position activated certain cells in its brain. In other words, the brain cells created a sort of map. May-Britt Moser and Edvard Moser later discovered cell types that form a system of coordinates for navigation. When a rat passed points in a hexagonal grid in the space, this activated the corresponding nerve cells in the brain’s coordinate system.

Model of a DNA double helix according to the correct dimensions of the natural molecule.

Licence: Attribution 4.0 International (CC BY 4.0) https://creativecommons.org/licenses/by/4.0/. Credit: Peter Artymiuk. Source: Wellcome Collection.

The fruit fly Drosophila melanogaster became the model organism for Thomas Hunt Morgan’s research. This fly thrives in the laboratory and reproduces prolifically, with new generations in just a couple of weeks. Its comparatively few and large chromosomes are directly visible in a microscope. Morgan studied flies with genetic changes, mutations, such as an extra leg, no antennae, or different wing shapes. He examined how these traits were inherited, and how they are linked to chromosomes. This laid the foundation for a new understanding of the mechanisms of heredity.

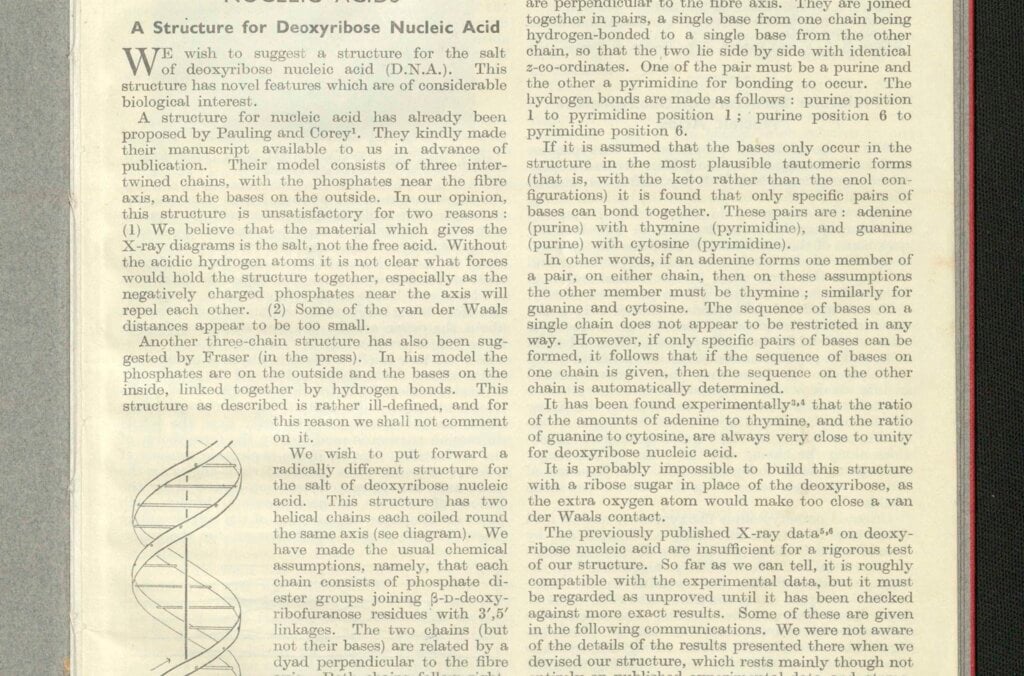

A ground-breaking step in genetic research was made in 1953, when Francis Crick and James Watson managed to determine the structure of the DNA molecule. This molecule consists of a long line of building blocks that form a double helix. Its building blocks are of four kinds, and their sequence serves as a code for genetic information.

This discovery was enabled by research by Rosalind Franklin, who died in 1958. The 1962 medicine prize was awarded to Crick, Watson, and Maurice Wilkins, a colleague of Rosalind Franklin.

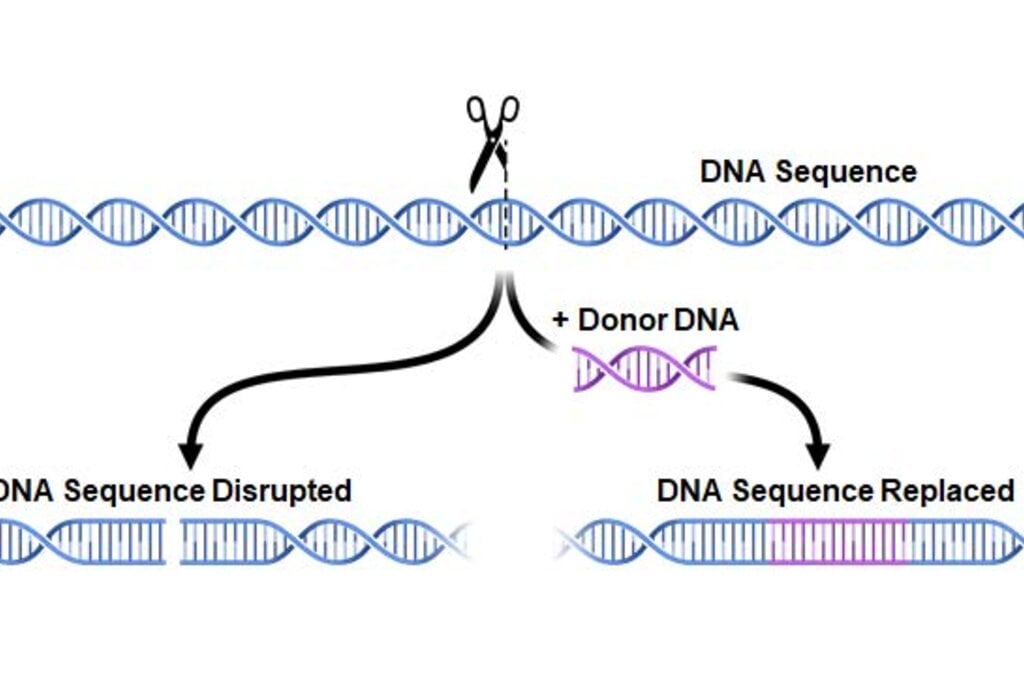

The CRISPR-Cas9 method, also known as “gene scissors,” makes it possible to modify genetic contents. It was developed by Emmanuelle Charpentier and Jennifer Doudna and is a considerably more powerful and precise instrument than previous methods for changing the genetic contents of organisms. This opens up new potential in research and the development of pharmaceuticals and organisms for food production.

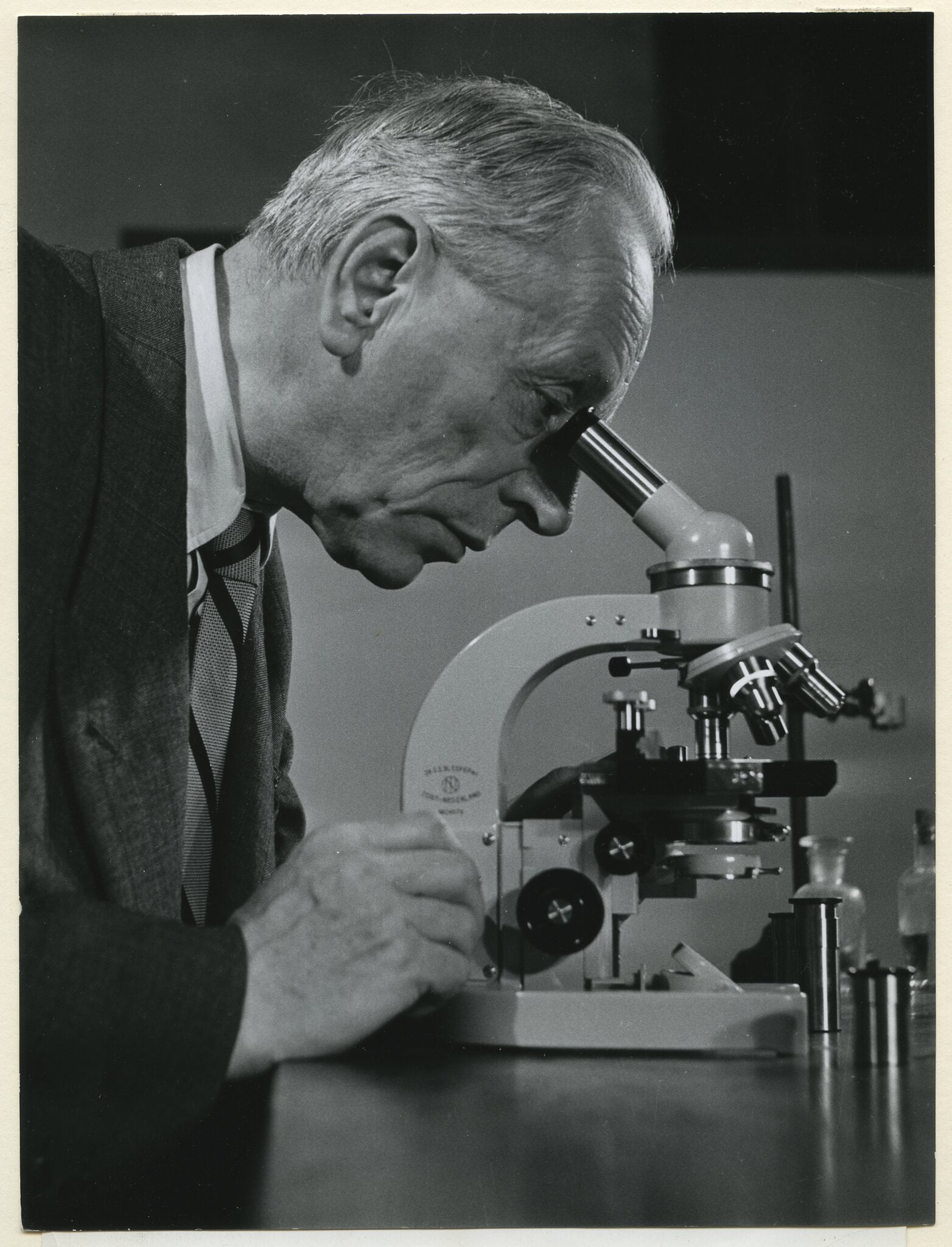

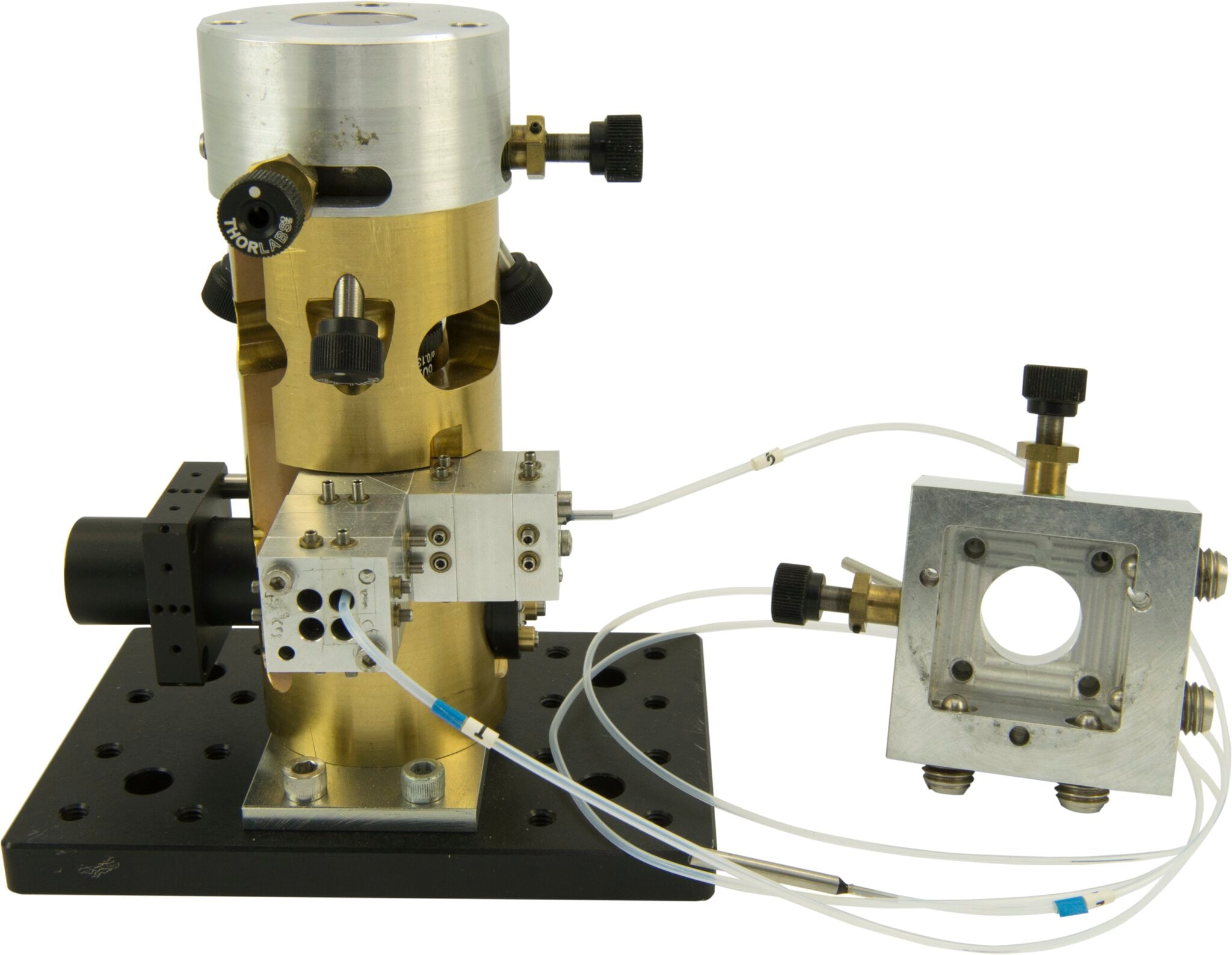

The microscope that medicine laureate Ralph Steinman used daily.

© Nobel Prize Outreach. Photo: Alexander Mahmoud

Eleonora Svanberg, a PhD student in mathematics at the University of Oxford, sits down with 2025 physics laureate John Martinis for a conversation about curiosity, discovery and the future of quantum technology. Martinis reflects on the choices that led him to exploring quantum mechanical behaviour and discusses his groundbreaking work – conducted with co-laureates Michel Devoret and John Clarke – demonstrating quantum phenomena on a macroscopic scale.

Daron Acemoglu, economic sciences laureate 2024, was joined by nine students from all over the world for a conversation on the topic of being a scientist. Acemoglu gave his best advice for finding great collaborators, spoke about the future of AI and shared how he has overcome obstacles during his scientific career.

The small matter of what everything is made from has long fascinated humankind. Microscopes have had a huge impact on our understanding of everything from the composition of materials to the building blocks of life. The work of a number of Nobel Prize laureates has allowed microscopes to evolve.

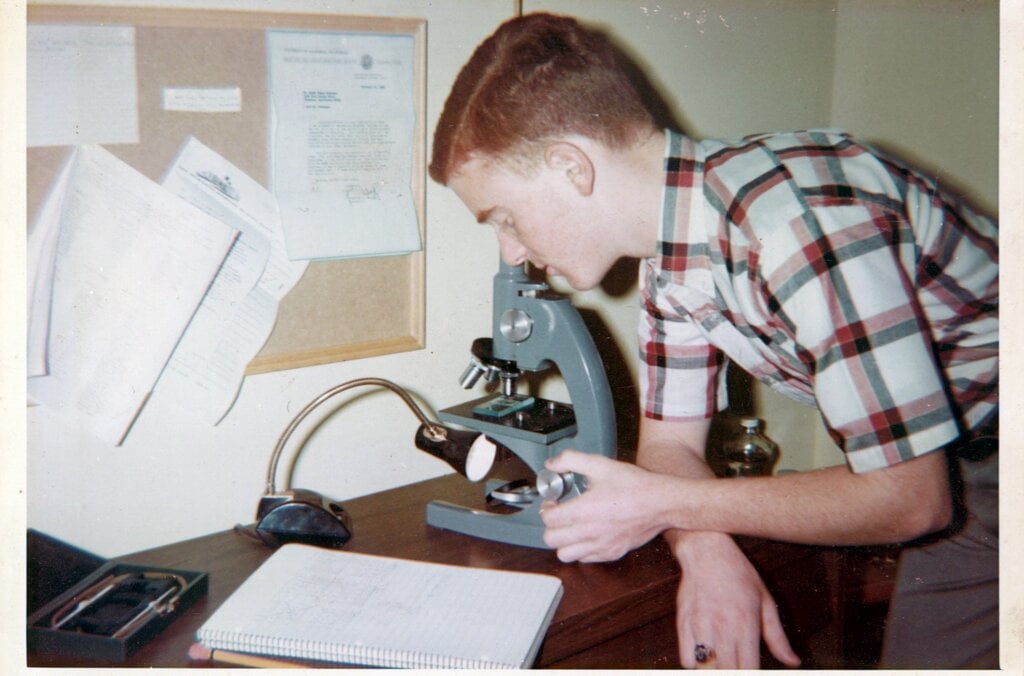

Peering into the plastic eyepiece of his toy microscope to take a closer look at a drop of pond scum, Randy Schekman was transported into a fascinating microbial world. Aged 12, he was determined to save money from odd jobs to buy his first professional microscope. But he couldn’t reach his goal as his mother kept borrowing money from his piggy bank.

“One Saturday I became so upset that, after mowing a neighbour’s lawn, I bicycled to the police station and announced to the desk officer that I wanted to run away from home because my mother took my money and I couldn’t use it to buy a microscope,” he said.

The desperate measures paid off: his parents picked him up from the police station and bought the microscope on their way home. It remained a “treasured possession” throughout Schekman’s school years.

The microscope’s ability to be an extension of the human eye has enabled scientists to make strides in biology and medicine since its invention in the 16th century. Around one century ago, a scientific breakthrough by Richard Zsigmondy led to the development of the ultramicroscope.

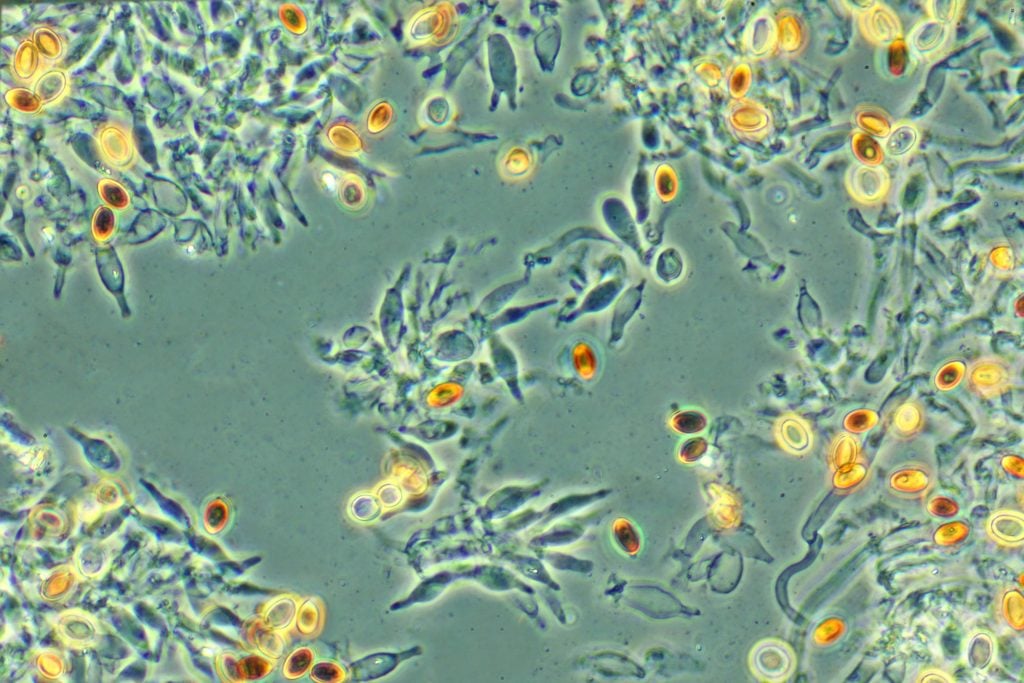

But it was not until Frits Zernike took a fresh look at how refraction gives rise to the image in a microscope that scientists could distinguish transparent specimens such as bacteria and cells without having to cleverly illuminate or stain them.

When light rays pass through transparent materials, such as biological specimens, there is a change in the phase of the light waves – the position of the wave crests in relation to one another – compared to an unimpeded light ray. Our eyes can’t detect this but these phase changes contain important information that can be used to visualise the material they have passed through.
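To give a feel for the size of such a phase change, the sketch below uses the standard relation between phase shift and optical path difference. The numbers are assumptions chosen for illustration (a cell 5 micrometres thick with refractive index 1.36, sitting in water with index 1.33, lit by green light at 550 nm), not measurements from Zernike’s work.

```python
import math


def phase_shift_rad(thickness_nm, n_specimen, n_medium, wavelength_nm):
    """Phase lag of light crossing the specimen versus light passing beside it."""
    # Extra optical path picked up inside the specimen.
    optical_path_difference_nm = (n_specimen - n_medium) * thickness_nm
    return 2.0 * math.pi * optical_path_difference_nm / wavelength_nm


# Assumed values: 5 micrometre cell (index 1.36) in water (index 1.33), green light.
shift = phase_shift_rad(5000.0, 1.36, 1.33, 550.0)

if __name__ == "__main__":
    print(f"phase shift: {shift:.2f} rad "
          f"({shift / (2 * math.pi):.2f} of a wavelength)")
```

Under these assumptions the light is delayed by roughly a quarter of a wavelength. The specimen barely changes the brightness of the light, so the delay stays invisible to the eye unless it is converted into contrast.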

Zernike worked out a way to make this phase change visible, and built the phase contrast microscope in the 1930s. His microscope was capable of enhancing the contrast of unstained, transparent specimens to reveal their inner workings in richer detail, revolutionising biological and medical research.

Despite being told by a major microscope manufacturer that his invention had “little practical value”, Zernike’s work had a massive impact that continues to be felt.

Phase contrast microscopes have enabled strides in biological and medical research to be made, especially in the fields of histology – the study of tissues and organs in the body – and cancer research. They also allow commercial products such as oils, drugs and textiles to be scrutinised.

Ernst Ruska also had to overcome criticism, which began at an early age. Yet, Ruska’s conviction and curiosity paid off when, as a young student in 1933, he developed the first electron microscope. By using a magnetic coil as a lens he harnessed electron beams – instead of light – to obtain images of extremely small objects. The resulting image had a resolution greater than that of light microscopes, which was a breakthrough for science.

His invention allowed the smallest objects, such as cell organelles, viruses, or atoms, to be examined.

Ruska helped commercialise his invention, so that mass-produced electron microscopes rapidly found applications within many areas of science.

The development of the electron microscope opened up a previously hidden world. Ruska was recognised with the Nobel Prize in Physics 1986, which he shared with Gerd Binnig and Heinrich Rohrer who also turned to electrons to design a new microscope.

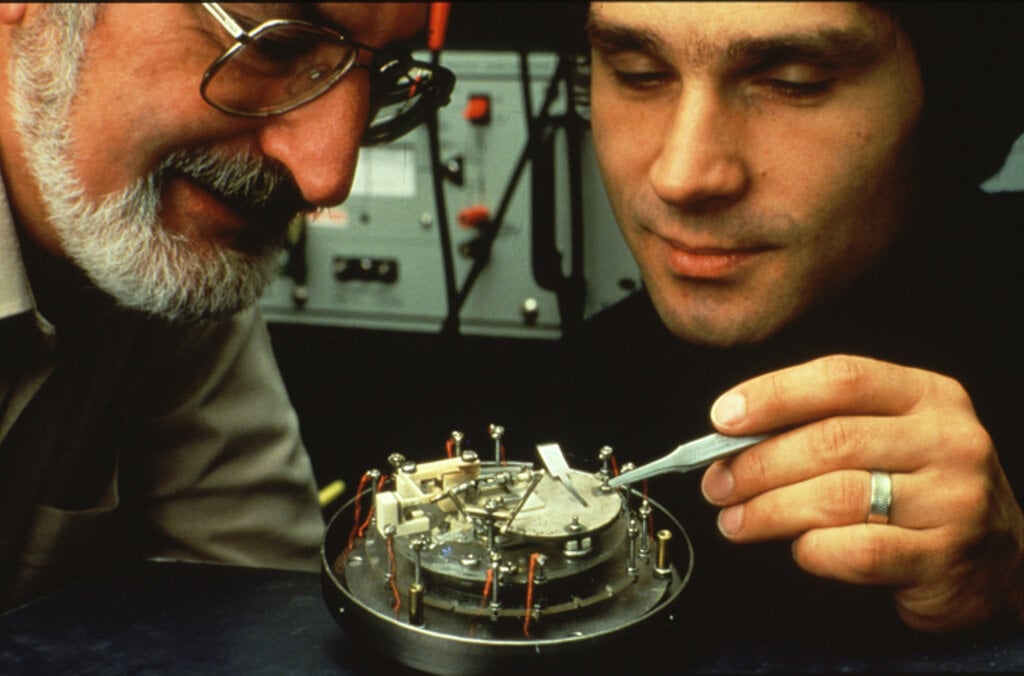

Binnig and Rohrer’s scanning tunnelling microscope (STM) used a single atom-sized stylus to create a topographical map of a surface. They exploited the tunnelling effect, in which a current flows between the stylus and the surface only if they are close enough together, to visualise the surface’s peaks and troughs at the atomic level. Thanks to their advances, crystal surfaces, DNA molecules and viruses could be visualised, opening up new vistas to life around us.

Electron microscopes are incredibly powerful, but they cannot be used to image living cells because the electrons destroy the samples.

For a long time, an alternative – optical microscopy – was held back because scientists presumed that optical microscopes would never obtain a better resolution than half the wavelength of light, and could therefore not be used to visualise tiny objects such as viruses and protein molecules in cells.

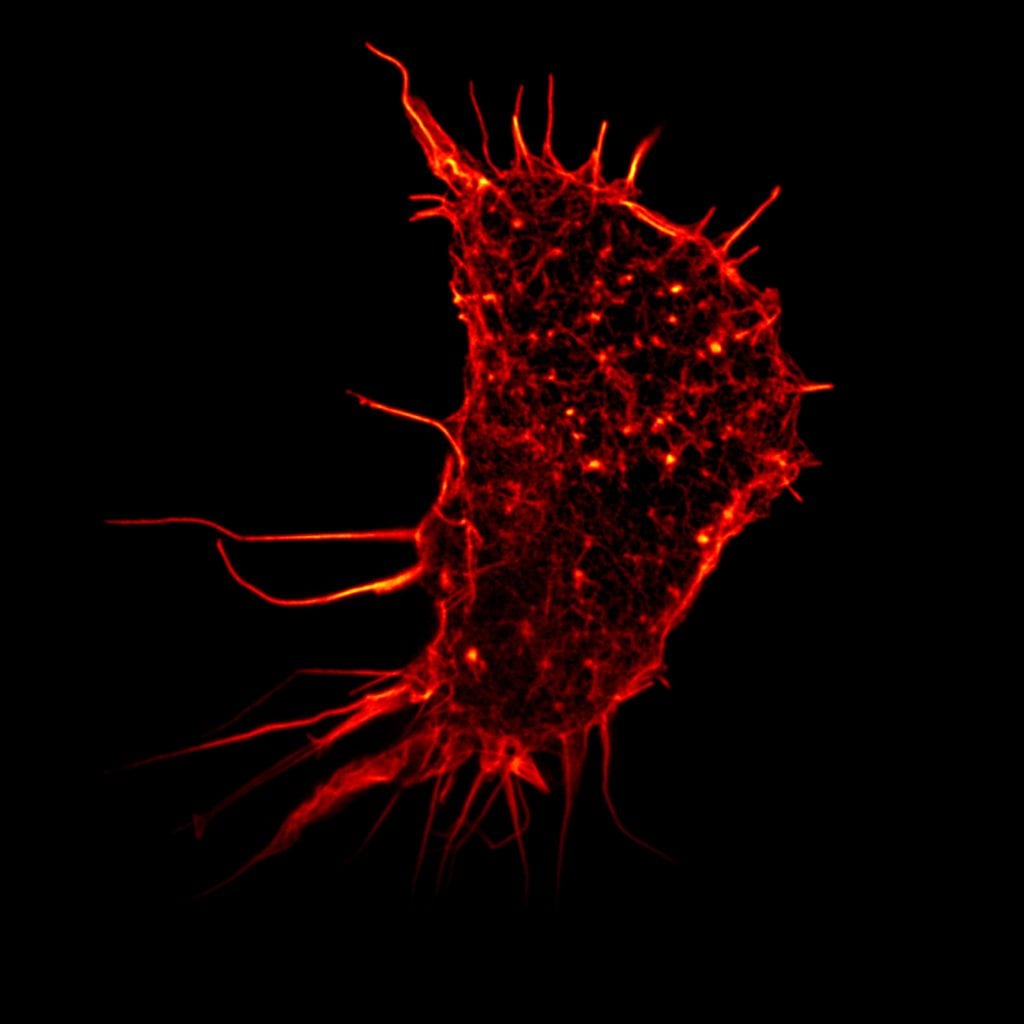

Eric Betzig, Stefan Hell and William Moerner, awarded the Nobel Prize in Chemistry 2014, used fluorescence to bypass this limit. Their work allowed the optical microscope to peer into the nanoworld. Nanoscopy – the ability to see past the optical limit of 200–300 nm – revolutionised the field of cell biology. Theoretically, there is no longer any object too small to be studied.
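As a rough illustration of where a limit of a few hundred nanometres comes from, the sketch below simply applies the "half the wavelength" rule to visible light. The wavelengths and the 100 nm virus size are assumed for illustration, and the rule ignores the properties of the lens, which also matter in a real microscope.

```python
def half_wavelength_limit_nm(wavelength_nm: float) -> float:
    """Smallest resolvable detail under the 'half a wavelength' rule."""
    return wavelength_nm / 2.0


def is_resolvable(object_size_nm: float, wavelength_nm: float) -> bool:
    """True if the object is at least as large as the half-wavelength limit."""
    return object_size_nm >= half_wavelength_limit_nm(wavelength_nm)


VIRUS_SIZE_NM = 100.0  # assumed size of a hypothetical virus

if __name__ == "__main__":
    # Assumed illustrative wavelengths of visible light, in nanometres.
    for wavelength_nm in (400.0, 500.0, 600.0):
        limit = half_wavelength_limit_nm(wavelength_nm)
        print(f"{wavelength_nm:.0f} nm light: limit about {limit:.0f} nm, "
              f"virus resolvable: {is_resolvable(VIRUS_SIZE_NM, wavelength_nm)}")
```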

Hell’s technique used fluorescent molecules to image nano-sized parts of a cell. From this he developed the stimulated emission depletion (STED) microscope, which he used at the turn of the millennium to image an E. coli bacterium at a resolution never before achieved in an optical microscope.

Betzig and Moerner laid the foundation for a second method: single-molecule microscopy. It relies on the ability to turn the fluorescence of individual molecules on and off. Scientists image the same area multiple times, letting just a few interspersed molecules glow each time. Superimposing these images yields a dense super-image resolved at the nanoscale.

Nanoscopy is, among other things, being used to better understand the function of living nerve cells and neuronal circuits. Scientists are working on allowing continuous observation, so they could “film” the workings of “molecular machines” such as proteins, which could be used, for example, to design new drugs.

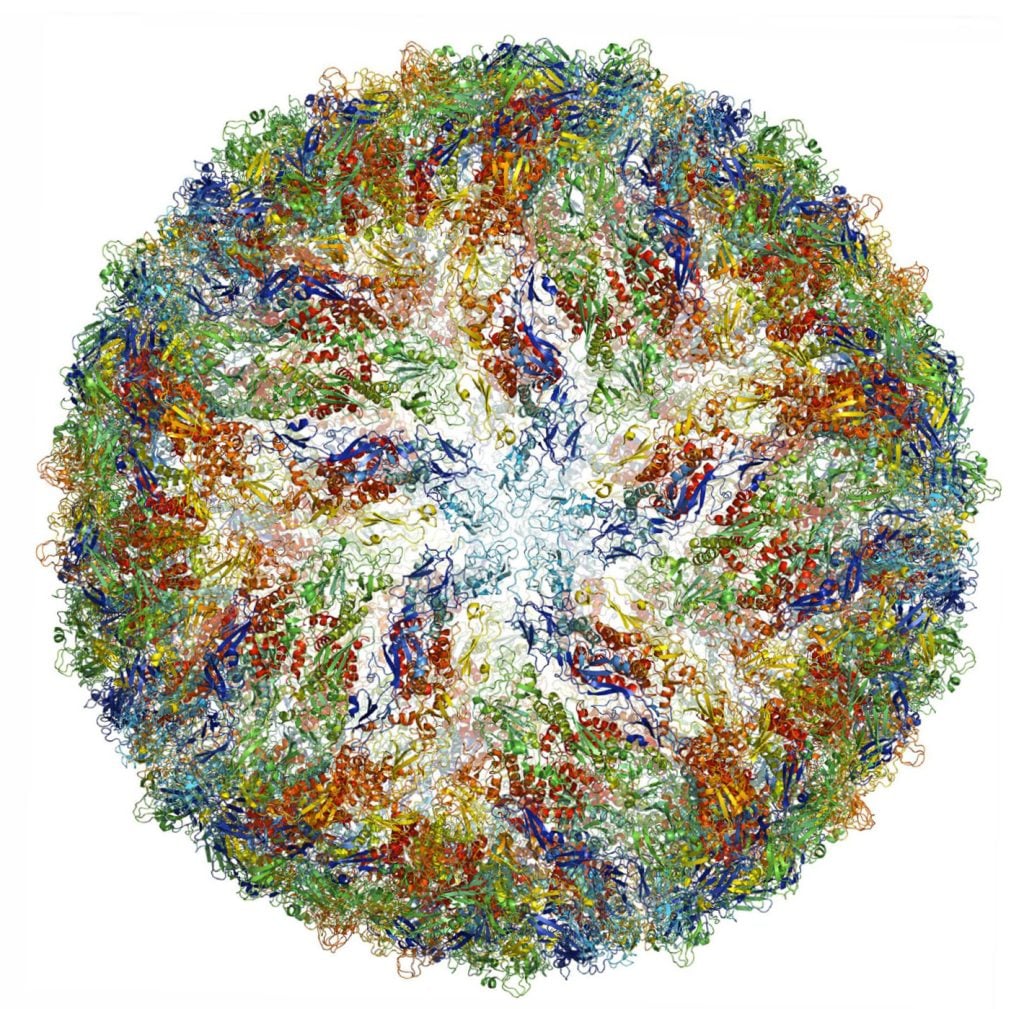

The Nobel Prize in Chemistry 2017 recognised discoveries that contributed to the development of cryo-electron microscopy, which has enabled a new era of biochemistry.

Electron microscopes were only useful for imaging dead objects, because the powerful electron beam destroys biological material. But thanks to the work of Richard Henderson, Joachim Frank and Jacques Dubochet, researchers can now freeze biomolecules mid-movement and portray them at atomic resolution.

Cryo-electron microscopy builds on Henderson and Frank’s research using electron microscopes to visualise biological samples and Dubochet’s method of cooling the water around molecules so rapidly they can be imaged in their ‘natural’ form. Today the technique is being successfully applied to multiple scientific disciplines to reveal molecular insights that would otherwise not be achievable.

In medical research, it is used, for example, to observe the 3D outer shell structures of viruses, uncover the atomic structures of proteins associated with neurodegenerative diseases, and to determine the structure and interactions of proteins that play a significant role in cancer. Better understanding of such mechanisms could lead to the development of more effective treatments.

Numerous Nobel Prize laureates have used microscopes to make beneficial breakthroughs for humankind. The founder of modern bacteriology, Robert Koch, reportedly penned a letter to microscope manufacturer Carl Zeiss that read, “a large part of my success I owe to your excellent microscopes,” having discovered the bacilli that caused tuberculosis and cholera, and receiving the Nobel Prize in Physiology or Medicine in 1905.

Microscopes will doubtless help future laureates to experience their eureka moments and brilliant breakthroughs. They are more than just instruments: they are gateways to tiny worlds that continue to enable revolutionary discoveries.

Learn more about how microwaves revolutionised our ways of communicating and helped us understand the origins of the universe.

The Pope is watching. This had better work. Such thoughts perhaps ran through the mind of Guglielmo Marconi in 1932 as he set up a special antenna in the Vatican gardens while His Holiness Pope Pius XI looked on.

The antenna used microwaves – radio waves with extra-high frequencies. Marconi also set up a portable microwave communications system attached to a car, connecting the travelling Pope to the Vatican. Some have claimed this was the first mobile phone, albeit a very large one.

Twenty-three years earlier, in 1909, Marconi had shared the Nobel Prize in Physics with Ferdinand Braun for his contributions to wireless telegraphy. The radio age was in full flow. But when Marconi turned to microwaves, he was embracing a part of the radio spectrum with very special properties.

Microwaves can carry vast quantities of information. They can also cook food, or scramble an enemy’s electronics. Microwaves have even helped reveal the very origins of the universe.

Long before Marconi built a microwave telephone for the Pope, someone else had already experimented with similar frequencies.

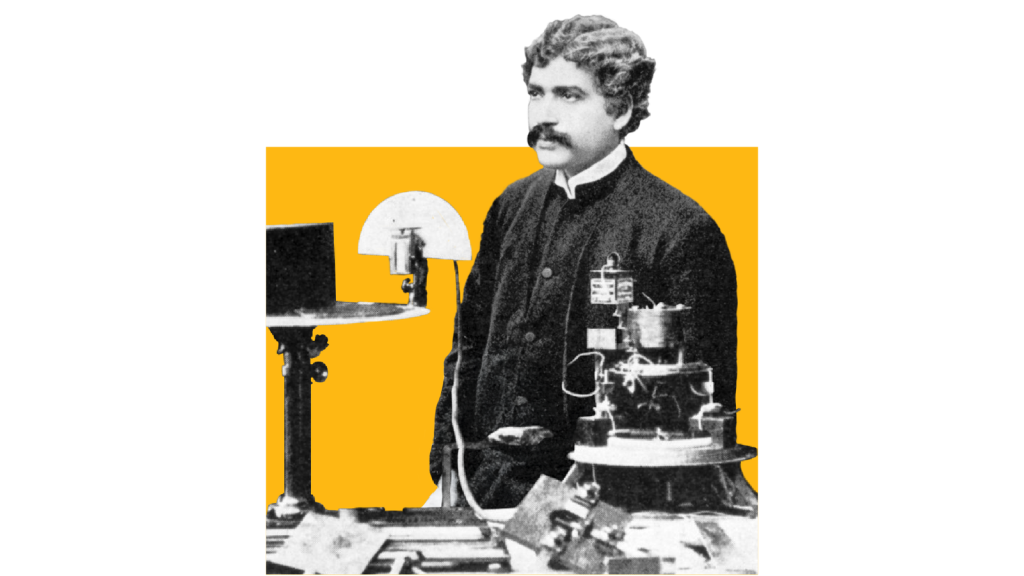

In the late 19th Century, a brilliant Indian scientist called Jagadish Chandra Bose – now, sadly, largely forgotten – developed some of the earliest microwave technology.

This included the very first equipment for generating millimetre waves – the waves used by 5G devices today. In 1895, Bose demonstrated that millimetre waves could ring a bell, and even fire a gun remotely.

Marconi arguably gained some of his stardom thanks to Bose.

On 12 December 1901, using a non-microwave frequency, the Italian inventor carried out the first transatlantic radio transmission. Sitting in a hut atop a Newfoundland cliff, he listened to a swirling deluge of noise in his earphone for many hours – until he heard what he had been waiting for.

Pip-pip-pip.

The Morse code for the letter S. Frantically, he passed the earphone to his colleague and asked, “Can you hear anything?” He could.

In the years since, some have questioned whether the transmission really happened as Marconi described.

However, recent investigations show that it was theoretically possible, even with his early radio equipment.

Among that equipment was a device called a coherer, a simple radio signal detector. And while the records are somewhat murky, it appears this coherer was designed by none other than Bose.

“He came up with fascinating instruments,” says Bose’s biographer, Sudipto Das.

But Bose was, perhaps, too far ahead of his time. For one thing, there were few useful applications for microwaves during the early 1900s that weren’t already feasible with lower frequency radio waves. Bose shifted his focus away from physics to his greater interest, plant physiology, and “almost sank into oblivion,” says Das.

However, World War Two made microwaves important again. Radar allowed militaries to detect enemy aircraft by bouncing radio signals off them. And a microwave device called the cavity magnetron, developed in Britain in 1940, turned out to be one of the most powerful and effective radar technologies around.

Small enough to install on aircraft, its fantastic range and precision gave Allied countries an important advantage that helped them win the war.

It was also a microwave-emitting magnetron that inspired Raytheon engineer Percy Spencer to invent microwave ovens in 1945. A peanut bar in his pocket began to melt when he walked past magnetrons in a laboratory. And when he later held up a packet of popcorn, the popcorn popped and “exploded all over the lab,” a Reader’s Digest article later recalled.

This happened because, at certain frequencies, microwaves excite molecules inside food, making them vibrate at the same frequency. The ensuing friction heats things up.

For microwave ovens, the frequency of choice is 2.4 gigahertz (GHz) – the same frequency used by many wi-fi routers. However, routers emit microwaves at much lower power levels than microwave ovens – that’s why you can’t make popcorn just by surfing the web.

Choosing the right frequency for cooking is really important, says Caroline Ross at the Massachusetts Institute of Technology. Microwaves at 2.4 GHz penetrate well inside food, and this frequency also allows for even absorption of the radiation by food molecules.

“When you go higher, like tens of gigahertz, the penetration depth is pretty small, so it gets blocked by almost anything – even water in the air,” she explains.
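For a sense of scale, the wavelength at each frequency follows from dividing the speed of light by the frequency. The sketch below is only that arithmetic, with a hypothetical function name; it does not model penetration depth, which also depends on the material being heated.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # defined value in a vacuum


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength in metres for a given frequency in hertz."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


# 2.4 GHz is the oven and wi-fi frequency from the text;
# 30 GHz is an assumed example of "tens of gigahertz".
for label, frequency_hz in [("2.4 GHz", 2.4e9), ("30 GHz", 30e9)]:
    print(f"{label}: wavelength of about {wavelength_m(frequency_hz) * 100:.1f} cm")
```

At 2.4 GHz the waves are roughly 12.5 cm long, while at 30 GHz they shrink to about a centimetre.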

Microwaves are special partly because of that ability, at certain frequencies, to interact with matter. OK, reheating your dinner leftovers might not seem very dramatic – but what about using microwaves to induce noises in people’s heads?

Military personnel who worked near to large microwave radar installations built during World War II later recalled that they could sense the radar operating. “It was possible to hear the repetition rate of the radar when we were standing close to the antenna horn,” one witness wrote in the 1950s.

James Lin, professor emeritus at the University of Illinois Chicago, heard such stories and attempted to reproduce the effect in his lab during the 1970s.

“I used myself as a guinea pig, basically,” he recalls, as he describes how he set up a microwave antenna and pointed it directly at his head.

Lin has suggested that the microwaves induced pressure waves inside his head, which he sensed as sound. To avoid cooking his brain, he kept the power levels low. “I could hear the pulse,” he says. “The fact I’m still alive… I guess it wasn’t too bad.”

Victims of so-called Havana Syndrome report experiencing strange grating noises, a feeling of pressure building up in their ears, dizziness, nausea and memory loss. Was an enemy directing a microwave beam at these people? While some have dismissed this hypothesis, Lin says it remains the most plausible explanation for the auditory symptoms.

Microwave weapons do exist, though those discussed in public tend to target machines rather than people. The US military has missiles that can zap enemy electronics with microwaves, for instance. Microwaves can even bring down drones.

In contrast, Lin has developed ways of using microwaves to heal – for example, to treat muscle diseases and irregular heartbeats.

For the latter, he says it is possible to insert a tiny microwave-emitting device into the heart, via a catheter, in order to destroy abnormal heart tissue. This technique, now widely used, is less invasive than open heart surgery, he points out: “Just deliver a pulse at high power, a microwave, to burn the tissue.”

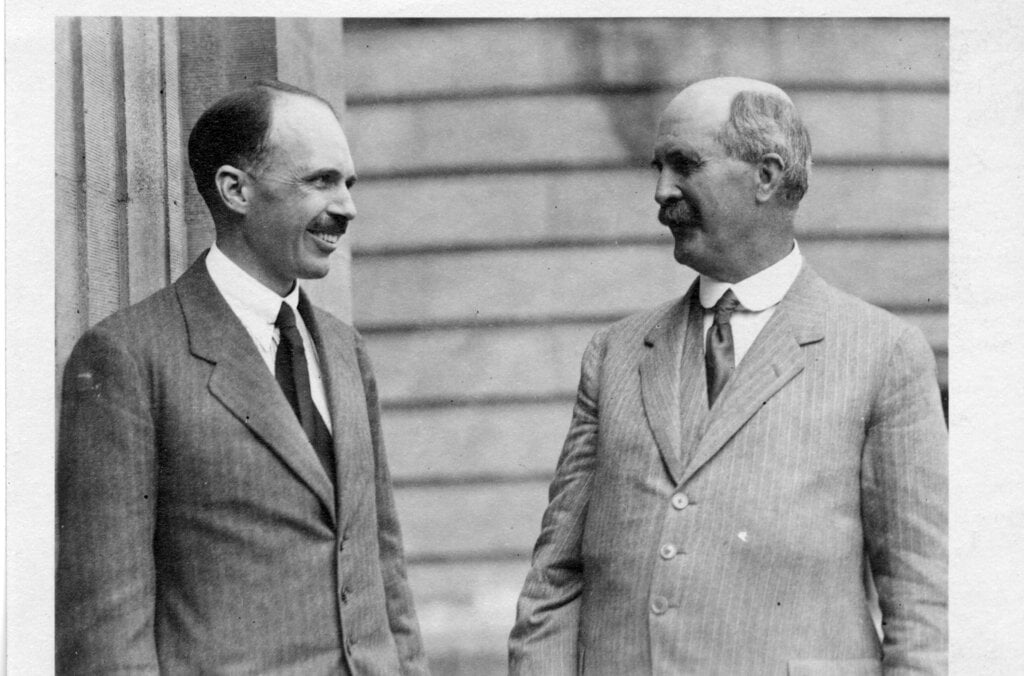

But microwaves don’t just save lives. They have also helped to reveal the origins of the universe. In the early 1960s, radio astronomers Arno Penzias and Robert Woodrow Wilson attempted to use a large, horn-shaped antenna in the US state of New Jersey as a radio telescope. But they kept picking up an irritating hiss or static.

At one point, they thought this was caused by pigeon droppings in the antenna, so they shooed the birds away and cleaned up the mess. However, the birds were not to blame. What Penzias and Wilson were hearing was the sound of the universe itself.

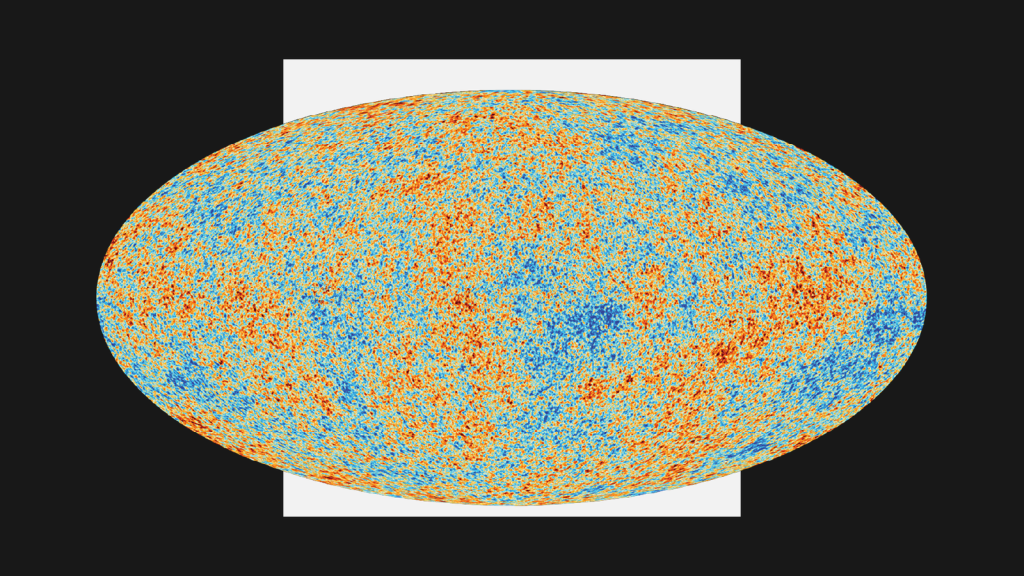

“It’s a snapshot of early time,” says Sean McGee, at the University of Birmingham. Penzias and Wilson had discovered what we now call cosmic microwave background radiation – a signature left over from the Big Bang, when the universe exploded into being roughly 13.8 billion years ago. Penzias and Wilson were awarded half of the 1978 Nobel Prize in Physics for their work.

The residual radiation they detected is present throughout the cosmos. A small proportion of the snowy static on analogue television screens is attributable to it. In other words, until LED screens took over, people would pick up remnants of the Big Bang in their living rooms.

Satellites eventually helped astronomers to map the cosmic microwave background, recording its fluctuations as slight differences in temperature. Those fluctuations appear to have influenced where galaxies formed as the universe expanded.

“We’re all the result of quantum fluctuations in the very early universe that then seeded galaxies,” says McGee.

Today, people use microwaves for any international call that is connected by satellite. A great leap from the car-mounted equipment Marconi installed for the Pope in the 1930s.

It’s rather fitting that many people use microwaves to talk to one another day in, day out – since that is also how the universe has talked to us, helping to confirm our understanding of the greatest story of all time. The story of how everything began.

By Chris Baraniuk, BBC World Service. This content was created as a co-production between Nobel Prize Outreach and the BBC.

Published March 2026

A help in solving crimes and a revealer of history’s secrets: radiocarbon dating is one of the keys that unlocks our world.

There would be heaps of the stuff in sewage. Willard Libby was sure of it.

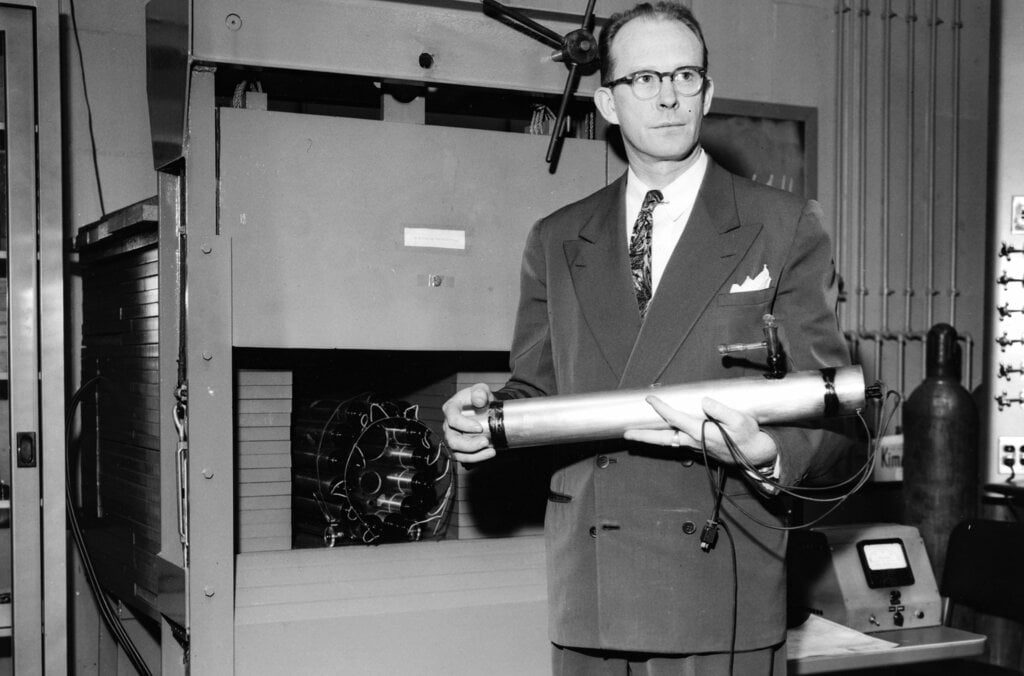

It was the mid-1940s, and the US chemist’s goal was to find a radioactive form of carbon, carbon-14, in nature. He had realised that, if it were there, it would leave a slowly decaying trace in dead plants and animals – so finding out how much was left in their remains would reveal when they died.

But Libby had to prove that carbon-14 existed in the wild in concentrations that matched his estimates. Other scientists had only ever detected carbon-14 after synthesising it in a lab.

Libby reasoned that living things would deposit it in their excrement, which is why he turned to sewage. Sewage produced by the people of Baltimore, to be precise. And he found what he was looking for.

Libby didn’t know it then but the idea that you could use radioactive carbon – radiocarbon – to date things would have all kinds of diverse applications.

But how does carbon-14 come into existence in the first place? Libby understood that it was being produced constantly by cosmic rays striking nitrogen atoms in the Earth’s atmosphere and changing their structure. The resulting carbon-14 atom quickly combines with oxygen to make radioactive carbon dioxide (CO2).

Back on the ground, plants absorb some of that radioactive CO2 in the air as they grow, as do the animals – including humans – that eat them. While a plant or animal is alive, it keeps replenishing its internal store of carbon-14 but, when it dies, that process stops. Because radiocarbon decays at a known rate, measuring how much is left in organic material will tell you the material’s age. A clock that starts ticking the moment something dies.

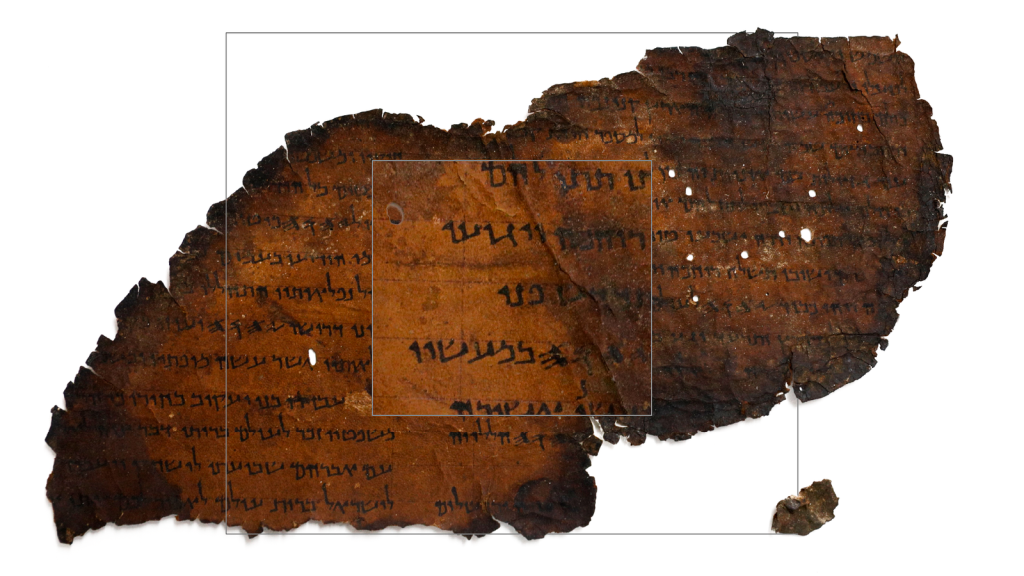

Once Libby confirmed there was carbon-14 in the methane gas from Baltimore sewers, he went on to detect radiocarbon in many different things, allowing him to prove how old they were – from the linen wrappings of the Dead Sea Scrolls to a piece of a ship found in the tomb of Sesostris III, an Egyptian king who lived nearly 4,000 years ago.

“This is a problem where you won’t tell anybody what you’re doing. It’s too crazy,” Libby later said. “You can’t tell anybody cosmic rays can write down human history. You can’t tell them that. No way. So we kept it secret.”

But once he had proved it worked, he let the world know. And, in 1960, Libby was awarded the Nobel Prize in Chemistry.

His technique works on organic material that is up to 50,000 years old. Older than that, and there is too little carbon-14 left. Carbon-14’s gradual decay is what makes radiocarbon dating possible – but that also means you can only go back so far.
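The ticking clock can be sketched with simple arithmetic. The example below assumes the commonly cited carbon-14 half-life of 5,730 years, a figure not given in the text, and uses hypothetical function names. It ignores the calibration curves that real laboratories apply, so it is an illustration of the principle rather than a dating method.

```python
import math

HALF_LIFE_YEARS = 5730.0  # assumption: commonly cited carbon-14 half-life


def fraction_remaining(age_years: float) -> float:
    """Share of the original carbon-14 left after a given number of years."""
    if age_years < 0:
        raise ValueError("age must not be negative")
    return 0.5 ** (age_years / HALF_LIFE_YEARS)


def age_from_fraction(fraction: float) -> float:
    """Uncalibrated age in years implied by the share of carbon-14 left."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    return -HALF_LIFE_YEARS * math.log2(fraction)


for age_years in (4_000, 5_730, 50_000):
    share = fraction_remaining(age_years)
    print(f"{age_years:>6} years: {share:.2%} of the carbon-14 remains")
```

After 50,000 years only around 0.2% of the original carbon-14 is left, which is why older samples are so hard to measure.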

Radiocarbon dating is now central to our understanding of history.

“In terms of putting things in order, in terms of being able to compare between different regions in particular, and understand that pace of change, it has been really important,” explains Rachel Wood, who works in one of the world’s most distinguished radiocarbon dating labs, the Oxford Radiocarbon Accelerator Unit.

She and her colleagues date materials including human bones, charcoal, shells, seeds, hair, cotton, parchment and ceramics, but also stranger substances. “We do the odd really unusual thing, like fossilised bat urine,” she says.

The lab uses a device called an accelerator mass spectrometer to directly quantify the carbon-14 atoms in a sample – unlike Libby, who was only able to measure the radiation emitted and thereby infer how much carbon-14 a sample contained. The accelerator can also date tiny samples, in some cases a single milligram, whereas Libby needed much more material.

Removing carbon-containing contaminants can take weeks, but once done the accelerator readily spits out a sample’s estimated age.

“It’s really exciting to be able to see the results immediately.”

Rachel Wood, Oxford Radiocarbon Accelerator Unit.

Radiocarbon dating has settled some long-standing arguments. Take the human skeleton discovered by theologian and geologist William Buckland in Wales in 1823. Buckland insisted it was no more than 2,000 years old, and for more than a century, no-one could prove he was wrong. Radiocarbon dating eventually showed it was actually between 33,000 and 34,000 years old – the oldest known buried human remains in the UK.

More recent human remains have also revealed their secrets thanks to this technology.

In 1975, a 13-year-old girl called Laura Ann O’Malley was reported missing in New York. Remains found in a California riverbed in the 1990s were thought to have originated in a historic grave until radiocarbon dating earlier this year showed they belonged to someone born between 1964 and 1967, who most likely died between 1977 and 1984. This fitted the timeline of O’Malley’s disappearance, and DNA analysis confirmed the remains were hers.

Forensic analyses often rely on the “bomb pulse” method of radiocarbon dating, which is possible due to the hundreds of atmospheric nuclear weapons tests that occurred during the 1950s and 1960s.

The blasts sent vast quantities of additional carbon-14 into the air, but these artificially high levels have been falling ever since. And so, by comparing carbon-14 measurements with that downward-sloping curve, it is possible to date materials from the mid-20th Century onwards very precisely – to within a year or so, in some cases.

“I don’t know of any other technique that comes close to that,” says Sam Wasser at the University of Washington. “It’s extraordinarily useful.”

Wasser has analysed radiocarbon dating results from ivory samples as part of efforts to crack down on the illegal wildlife trade. The data can show whether the elephants died before or after the 1989 ban on ivory sales, whatever traffickers may claim.

One man jailed on this evidence is Edouodji Emile N’Bouke, convicted in Togo in 2014. While DNA tests uncovered the geographic origin of the ivory he trafficked, radiocarbon dating showed exactly when the elephants were poached. These two strands of evidence were “the smoking gun critical to bringing N’Bouke to justice,” the US State Department later said.

The same techniques have exposed artworks as forgeries. Take the painting of a village scene that one forger claimed was made in 1866. Radiocarbon dating confirmed that it had actually been painted, and artificially aged, during the 1980s.

Radiocarbon research has also informed reports by the Intergovernmental Panel on Climate Change (IPCC), which in 2007 was awarded the Nobel Peace Prize – along with former US Vice President Al Gore – for its work disseminating information about climate change.

“It’s also very useful for people who want to use climate models to predict what the climate may potentially be like in the future,” says Tim Heaton at the University of Leeds. Scientists can use radiocarbon records to establish how Earth’s climate changed over time, and check climate models against those results, validating the models’ accuracy.

But another clock is ticking. Fossil fuels contain copious quantities of carbon but no carbon-14 – the organisms that became coal, natural gas and oil died so long ago that the carbon-14 they once contained has long since decayed. That means fossil fuel emissions are diluting the carbon-14 in Earth’s atmosphere today, which has a direct effect on how much radiocarbon ends up in living things.

Heather Graven at Imperial College London says that, in the worst-case scenario of extremely high emissions during the next century or so, the accuracy of radiocarbon dating could crumble.

“Something that’s freshly produced will have the same [radiocarbon] composition as something that’s maybe 2,000 years old,” she says. Radiocarbon dating wouldn’t be able to tell the two apart.
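A toy calculation shows how much dilution that would take. It assumes a carbon-14 half-life of 5,730 years and a deliberately simple mixing model in which fossil carbon, containing no carbon-14, is blended into an otherwise unchanged atmosphere. Both the model and the function name are illustrative assumptions, not Graven's method.

```python
HALF_LIFE_YEARS = 5730.0  # assumption: commonly cited carbon-14 half-life


def fossil_share_for_apparent_age(apparent_age_years: float) -> float:
    """Share of carbon-14-free carbon that makes fresh material look this old.

    Toy model: diluting carbon-14 by some share lowers the carbon-14 level
    by the same share, mimicking the loss that decay would cause over time.
    """
    if apparent_age_years < 0:
        raise ValueError("age must not be negative")
    return 1.0 - 0.5 ** (apparent_age_years / HALF_LIFE_YEARS)


share = fossil_share_for_apparent_age(2_000)
print(f"Fossil carbon share needed to mimic 2,000 years of decay: {share:.1%}")
```

Under these assumptions, roughly a fifth of the carbon would need to come from fossil sources before something freshly grown looked 2,000 years old.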

She spent many years working to heighten the precision of radiocarbon dating, by making painstaking measurements of the radiocarbon found in tree rings, for example, to reveal variations in atmospheric levels of carbon-14 across millennia.

Extremely precise curves of radiocarbon levels are now available dating back 14,000 years or so. But fossil fuel emissions may eventually bring this era of incredible precision to an end.

By Chris Baraniuk, BBC World Service. This content was created as a co-production between Nobel Prize Outreach and the BBC.

Published February 2026

The Nobel Prize, National Geographic Documentary Films and Academy Award-winning filmmaker Orlando von Einsiedel have collaborated on a 5-part short documentary series, celebrating the ongoing impact and influence of Nobel Peace Prize laureates around the world. Watch the films here.

In 1998, Makur Diet lost his leg to a bullet in war-damaged South Sudan. Despairing for his future, Makur was close to committing suicide, until he was given a prosthetic leg. Makur realised that he now had a chance to do something good in the world and decided to devote his life to helping other amputees. At an International Committee of the Red Cross (ICRC) centre in South Sudan, Makur makes prosthetic legs and gives hope to other persons who have lost a limb. He is helping to rebuild his country, one leg at a time.

Makur works at an International Committee of the Red Cross centre in South Sudan. The ICRC received the Nobel Peace Prize in 1917, 1944 and 1963.

In the chaos of the world’s largest refugee camp, Kamal Hussein is a beacon of hope. From his small ramshackle hut, and armed only with a microphone, he has taken it upon himself to try and reunite the thousands of Rohingya families who have been torn apart by violence and ethnic cleansing in Myanmar. However, in finding lost family members and bringing them back together, he is not just helping them. He is also finding peace for himself.

Kamal’s work is funded by the United Nations High Commissioner for Refugees, recipient of the Nobel Peace Prize in 1954 and 1981.

In an area of Iraq destroyed by ISIS, Hana Khider leads an all-female team of Yazidi deminers in their attempts to clear the land of mines. Their job involves painstakingly searching for booby traps in bombed-out buildings and fields, where one wrong move means certain death. Even though the devastation caused by ISIS is still evident and the local people are suffering, they are trying to forget the past and remain hopeful.

Hana works for the Mines Advisory Group, an organisation that is part of the International Campaign to Ban Landmines, a coalition awarded the Nobel Peace Prize in 1997.

How would natural habitats develop without human interference? In this documentary we follow an international team of scientists and explorers on an extraordinary mission in Mozambique to reach a forest that no human has set foot in. The team aims to collect data from the forest to help our understanding of how climate change is affecting our planet. But the forest sits atop a mountain, and to reach it, the team must first climb a sheer 100m wall of rock.

The scientists’ work is based on research conducted by the Intergovernmental Panel on Climate Change, recipient of the Nobel Peace Prize in 2007.

Here you get to follow two South African musicians: Tsepo Pooe, who grew up in Soweto Township, and Lize Schaap, who grew up in wealthy Pretoria. They are both part of a very special orchestra, the Miagi Orchestra. Inspired by the legacy of 1993 Nobel Peace Prize laureate Nelson Mandela, the orchestra aims to help the nation overcome decades of violence, conflict and division through the power of music.

The story behind the 100-year-old discovery that continues to render Nobel Prizes.

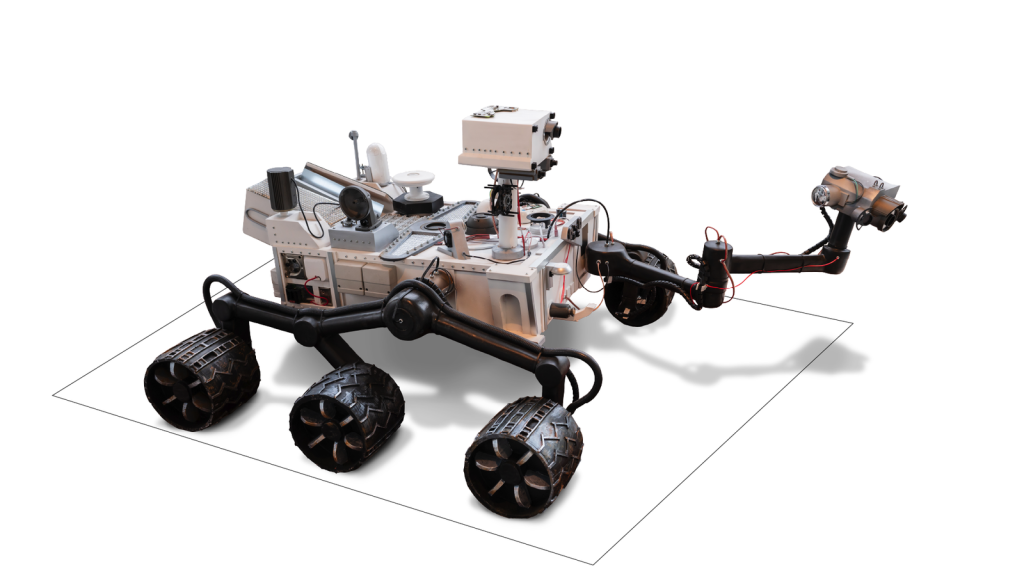

Alone in the Martian desert, a robot was looking for answers. In 2012, Nasa’s Curiosity rover scooped up a small pile of sand, ingested it, and blasted it with X-rays. The intrepid bot was going to find out what that sand was made of, which could in turn reveal information about the historical presence of water on Mars – because any water in this dusty, red plain was long gone.

Nearly a century earlier, in 1915, William and Lawrence Bragg – a father and son team – had been awarded the Nobel Prize in Physics for their work on X-ray crystallography, a technique that makes it possible to determine the atomic and molecular structures of crystals by studying how X-rays diffract, or deviate, when they interact with them.

Many materials, from tiny proteins to metals, can form crystals, and X-ray crystallography became the gold standard for revealing how various forms of matter are put together.

Back on Earth, Michael Velbel at Michigan State University was waiting eagerly for data from Curiosity on Mars. This was the first time X-ray crystallography had ever been done on another planet.

“I was shadowing the mission all along,” recalls Velbel.

Curiosity’s analyses revealed details of the water content of minerals on Mars, which have given credence to – though not proved – the hypothesis that the planet had large bodies of water just a few hundred thousand years ago.

“We can finally get a grasp on that,” says Velbel.

Knowing what things are made of lets us do some amazing things. Analysis of atomic and molecular structures has helped scientists design drugs, unravel the secrets of DNA, and even make better batteries.

You can tell X-ray crystallography is important because it has played a role in numerous Nobel Prizes – some put the tally at more than two dozen. And yet few people know just how awesome a technique it is.

“A lot of people call me ‘Chrystal the Crystallographer’ – or ‘C-squared’,” jokes Chrystal Starbird at the University of North Carolina at Chapel Hill. She remembers the first time she used X-ray crystallography to determine a molecular structure. “I was looking at something no-one else had looked at before. I was like, ‘Wow, how cool is that!’”

When Starbird does this kind of analysis, one key early step in the process is taking, say, a protein and working out how to grow crystals of it on a very small scale. Just as water forms ice crystals when it freezes, proteins can form very tiny crystals under certain conditions.

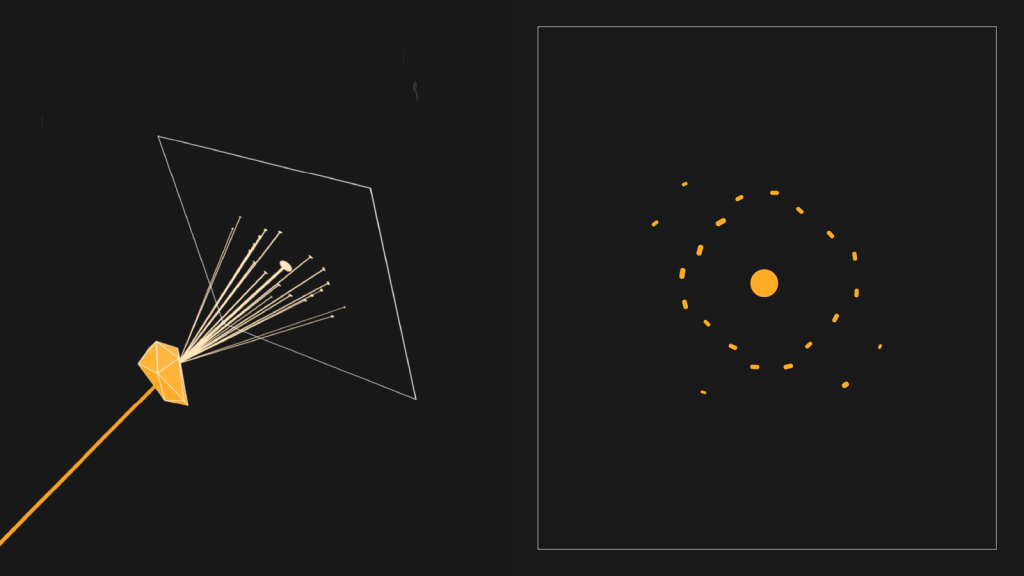

Those crystals are then harvested with tiny hair-like loops – which can be a very tricky procedure – and placed on an X-ray diffractometer.

The crystals are necessary because when you shine X-rays on their ordered structure, you get a regular diffraction pattern – precise markings specific to the chemical nature of the crystal in question.
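The link between a diffraction pattern and a crystal's internal spacing is usually expressed through Bragg's law, nλ = 2d sin θ, named after the Braggs. The sketch below solves it for the spacing d between layers of atoms. The inputs are assumed, illustrative values (a copper X-ray source of about 1.5406 ångström and a made-up peak angle), and the function name is hypothetical; solving a whole protein structure involves far more than this single step.

```python
import math


def lattice_spacing_angstrom(wavelength_angstrom: float,
                             theta_degrees: float,
                             order: int = 1) -> float:
    """Solve Bragg's law, n * wavelength = 2 * d * sin(theta), for d."""
    if not 0.0 < theta_degrees < 90.0:
        raise ValueError("theta must be between 0 and 90 degrees")
    if wavelength_angstrom <= 0 or order < 1:
        raise ValueError("wavelength must be positive and order at least 1")
    return order * wavelength_angstrom / (2.0 * math.sin(math.radians(theta_degrees)))


# Assumed inputs: copper K-alpha X-rays and an illustrative peak at theta = 15 degrees.
spacing = lattice_spacing_angstrom(1.5406, 15.0)
print(f"Spacing between atomic layers: about {spacing:.2f} angstrom")
```

Each spot in a diffraction pattern corresponds to an angle like this one, so a regular crystal yields a regular set of spacings.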

However, proteins are much more complicated than water molecules so conditions must be just right for them to crystallise. Starbird may have to try hundreds of different approaches – using different chemicals, temperatures or humidity levels – before it works.

“I’m somebody who’s OK with delayed gratification,” she jokes.

One scientist who would probably have related to that was Dorothy Crowfoot Hodgkin. She spent 34 years using X-ray crystallography to work out the structure of insulin, beginning in the 1930s. Insulin is a hormone that helps control blood sugar levels but Type 1 diabetics are unfortunately unable to produce it.

In Hodgkin’s case, obtaining the insulin crystals wasn’t especially difficult. But because insulin contains no fewer than 788 atoms, it took a long time for her to map the entire structure using early X-ray crystallographic methods. Her achievement made it much easier to mass-produce insulin for the treatment of diabetes.

By the time Hodgkin had finally finished, in 1969, she had already been awarded the 1964 Nobel Prize in Chemistry for her X-ray crystallographic studies. She had also determined the structures of penicillin – an important antibiotic – and vitamin B12.

She died in 1994. At a memorial service the following year, Max Perutz – who also received a joint Nobel Prize in Chemistry for crystallographic work – said, “Her X-ray cameras bared the intrinsic beauty beneath the rough surface of things.” He praised both her kindness and her “iron will” to succeed.

“She was a terrific inspiration.”

Elspeth Garman at the University of Oxford, who knew Dorothy Crowfoot Hodgkin.

Garman describes an X-ray diffraction pattern as “an incredibly complicated reflection.”

The result is a pattern, which you can painstakingly convert into a topographical map of the structure, or a three-dimensional model.

Garman notes that many women have excelled at X-ray crystallography. She credits the Braggs, in part, for this. “They had the most amazing academic tree of women that they encouraged and took on as graduate students when people in other fields didn’t,” she says.

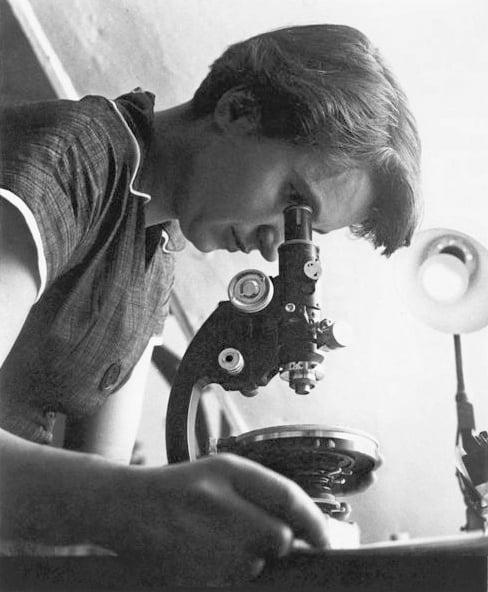

Besides Hodgkin, there was also Rosalind Franklin, whose X-ray diffraction image of DNA was among many findings she made while trying to work out the structure of DNA. Some of her findings influenced Francis Crick, James Watson and Maurice Wilkins in their own endeavours on this subject. They were awarded the Nobel Prize in Physiology or Medicine for their work in 1962. Many argue Franklin never received sufficient credit.

X-ray crystallography has also been involved in more recent Nobel Prize-awarded work – including the 2020 Nobel Prize in Chemistry for genome-editing technology, which has roots in crystallographic studies of RNA.

One hugely important application of X-ray crystallography is in drug discovery. It has helped scientists find drugs for sickle-cell disease and even certain cancers, for example.

Rob van Montfort, group leader in the Centre for Cancer Drug Discovery at the UK’s Institute of Cancer Research, says crystallography can reveal which compounds could block or control key proteins in the body, and thus treat a disease.

“X-ray crystallography… provides pictures showing how, exactly, the compound binds to the molecule,” he explains.

Recent technological developments have allowed increasingly complex crystallography studies, Garman says.

At Diamond Light Source, a science facility in the UK, staff use X-ray beamlines to check the medicinal potential of compounds at high speed, by analysing potential binding-sites on a given protein. “Overnight, you could look at 200,” says Garman. “It’s absolutely staggering.”

Researchers have also used this approach to study battery materials – a key technology for the transition away from fossil fuels. Phil Chater, crystallography science group leader at Diamond Light Source, says X-ray crystallography reveals how the materials inside batteries can degrade over time.

Lithium-ion batteries work by allowing lithium ions to travel between layers of material – that’s how they charge up and discharge energy.

“Maintaining that [layer] structure is very important for the prolonged life of these batteries,” Chater says.

But crystallography allows you to see, sometimes, how layers are changing, affecting the ability of the ions to move in and out, he adds. Scientists can then search for ways of overcoming the problem.

X-ray crystallography has clearly made waves in many fields. But there’s an elephant in the room, says Garman.

There’s also artificial intelligence (AI). If AI can accurately predict molecular structures, there may be less need to use X-ray crystallography for this task. But Starbird cautions that there are many structures AI doesn’t predict well.

“I think people have a misconception that crystallography might be done soon, because we have AI – we’re not even close to that,” she says.

The Braggs, presumably, would be glad to hear this. And X-ray crystallography devices may go on even more exciting adventures in the future. Velbel suggests sending one to a distant comet orbiting our sun.

“Wow. I’d want to see what comet ice looks like,” he says, explaining that we might find exciting mixtures of unusual compounds if we could study it up-close. “I think it would be fascinating.”

By Chris Baraniuk, BBC World Service. This content was created as a co-production between Nobel Prize Outreach and the BBC.

Published February 2026